Crawlkit

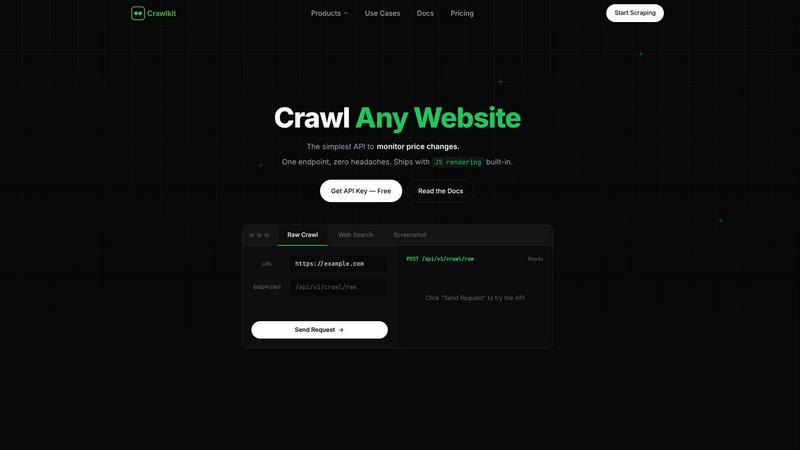

Crawlkit is a reliable API platform that turns any website into structured data with a single call.

About Crawlkit

Crawlkit is a web data extraction platform built for developers and data teams. It targets the main frustration of modern web scraping: operational overhead. Instead of wrestling with rotating proxies, headless browsers, CAPTCHAs, and rate limits, you call a single API that handles this complexity for you. The underlying infrastructure is designed for high success rates and scalability, so data access stays consistent as target websites update their anti-bot protections.

The goal is to let users focus on data analysis and application logic rather than on building and maintaining fragile scraping infrastructure. With a developer-first approach, Crawlkit offers a simple REST API and SDKs for popular languages, which suits projects of any scale, from one-off data pulls to enterprise data pipelines. For a business, it turns the variable cost and risk of in-house scraping into a predictable, usage-based expense. For a data engineer, it removes most of the ongoing parser maintenance and infrastructure tuning that reliable data ingestion otherwise requires.

Features of Crawlkit

Unified API for Diverse Sources

Crawlkit consolidates access to a wide array of data sources under one simple API. Whether you need data from LinkedIn company pages, Instagram profiles, Google Search results, or mobile app stores, you use the same consistent request format and authentication. This eliminates the need to integrate and manage multiple specialized scraping tools or APIs, drastically simplifying your data stack and reducing code complexity.
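To illustrate what a single, consistent request format means in practice, here is a minimal TypeScript sketch. The base URL (`api.crawlkit.example`), the endpoint paths, the JSON body, and the Bearer-token header are all hypothetical placeholders, not Crawlkit's documented API; the official docs or the Playground show the real request shape.

```typescript
// Hypothetical: the base URL, endpoint paths, and auth header below are
// placeholders chosen for illustration, not Crawlkit's documented API.
const BASE_URL = "https://api.crawlkit.example/v1";

type Source =
  | "linkedin/company"
  | "instagram/profile"
  | "google/search"
  | "appstore/reviews";

interface CrawlRequest {
  url: string;
  init: { method: "POST"; headers: Record<string, string>; body: string };
}

// Every source shares the same method, authentication, and body encoding;
// only the path and the parameters change.
function buildRequest(
  source: Source,
  params: Record<string, string>,
  apiKey: string,
): CrawlRequest {
  return {
    url: `${BASE_URL}/${source}`,
    init: {
      method: "POST",
      headers: {
        Authorization: `Bearer ${apiKey}`,
        "Content-Type": "application/json",
      },
      body: JSON.stringify(params),
    },
  };
}

const req = buildRequest("instagram/profile", { username: "nasa" }, "YOUR_API_KEY");
```

On Node.js 18 or later, the returned object could be passed to the built-in `fetch` as `fetch(req.url, req.init)`. Switching from an Instagram profile to a LinkedIn company page changes only the `source` argument and its parameters; authentication and request handling stay the same.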

Robust Anti-Bot Infrastructure Abstraction

The platform does its heaviest lifting on the hardest parts of web scraping. It automatically manages rotating proxies, executes JavaScript for single-page applications, bypasses CAPTCHAs, and respects dynamic rate limits. This infrastructure is continuously updated against evolving anti-bot measures, with the aim of delivering high success rates and complete, rendered page data without developer intervention or custom configuration.

Developer-First SDKs and Playground

Crawlkit is built to fit into developer workflows. It provides official SDKs for popular languages such as Node.js, so you can start fetching structured data in minutes with a few lines of code. The interactive Playground lets you test API endpoints in real time, inspect the parsed JSON output, and generate ready-to-use code snippets, which makes prototyping and debugging straightforward.

Transparent, Credit-Based Pricing

Crawlkit employs a clear, pay-as-you-go pricing model based on credits. Each API endpoint has a fixed credit cost, and you only pay for successful requests. Credits never expire, there are no monthly commitments or hidden fees, and volume discounts apply automatically. This model provides predictable costs, aligns spending directly with value received, and offers flexibility for projects of any scale without lock-in.

Use Cases of Crawlkit

CRM and Sales Intelligence Enrichment

Automatically enrich lead and contact records in your CRM with fresh, structured data. Use the LinkedIn API to pull job titles, company information, and professional details. This transforms manual research into an automated pipeline, enabling sales teams to personalize outreach at scale and gain deeper insights into their prospects directly within their existing tools.

Competitive and Market Intelligence

Systematically track competitors' digital footprints. Monitor their Instagram for follower growth and engagement trends, scrape their app store reviews for user sentiment analysis, or track their public job postings on LinkedIn to infer hiring strategies. Crawlkit turns sporadic manual checks into structured, historical datasets for strategic analysis.

Social Media Monitoring and Analytics

Build dashboards to track brand mentions, campaign performance, or industry trends across the web. Use the Search API to gather news and forum mentions, while the Instagram API provides precise metrics on public profiles. This enables marketing teams to measure ROI, understand audience sentiment, and identify influencers or trending content.

Product and Review Aggregation

Create a comprehensive view of your product's reputation by aggregating user reviews from multiple app stores (Play Store, App Store). Analyze competitor products by scraping their detail pages and review sections. This data is crucial for product managers to prioritize feature development, understand user pain points, and conduct competitive benchmarking.

Frequently Asked Questions

What happens if a request fails?

Crawlkit operates on a "refund on failure" policy. If an API request fails to return the requested structured data, the credits spent on that request are automatically refunded to your account. The platform's infrastructure is designed for high reliability, but this guarantee ensures you only pay for successful, usable data.
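The refund-on-failure policy can be modelled as a debit followed by a full refund when a call does not return usable data. The sketch below is a hypothetical client-side ledger for tracking a local credit balance; the `CreditLedger` class and `CallResult` shape are illustrative names, and Crawlkit itself applies refunds on its side automatically. The per-call costs (1 credit per Instagram profile, 2 per LinkedIn profile) and the 100-credit starting balance come from the pricing description on this page.

```typescript
// Hypothetical client-side model of Crawlkit's refund-on-failure policy.
interface CallResult {
  endpoint: string;
  cost: number;       // credits charged for this endpoint
  succeeded: boolean; // whether structured data was returned
}

class CreditLedger {
  private balance: number;

  constructor(initialCredits: number) {
    this.balance = initialCredits;
  }

  // Debit the endpoint's cost, then refund it in full if the call failed.
  record(result: CallResult): number {
    if (result.cost > this.balance) {
      throw new Error(`Not enough credits for ${result.endpoint}`);
    }
    this.balance -= result.cost;
    if (!result.succeeded) {
      this.balance += result.cost;
    }
    return this.balance;
  }

  get remaining(): number {
    return this.balance;
  }
}

// Example: start from the 100 free credits.
const ledger = new CreditLedger(100);
ledger.record({ endpoint: "instagram/profile", cost: 1, succeeded: true });  // balance 99
ledger.record({ endpoint: "linkedin/profile", cost: 2, succeeded: false }); // refunded, still 99
```

Only successful calls reduce the balance, which is what makes the spend per usable record predictable.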

Do credits expire?

No, purchased credits never expire. You can buy a bulk pack of credits and use them at your own pace, whether over weeks, months, or years. This provides maximum flexibility and eliminates pressure to use the service within a specific billing cycle, making it ideal for both sporadic and continuous data needs.

Can I request a new data source or API?

Yes. Crawlkit invites users to get in touch about APIs that are not yet available, and it builds new data source APIs based on user demand. The platform can therefore evolve to meet your specific project requirements, extending its value beyond the currently listed integrations.

How is Crawlkit different from building my own scraper?

Building your own scraper involves significant hidden costs: ongoing parser maintenance, managing proxy networks, solving CAPTCHAs, handling rate limits, and ensuring scalability. Crawlkit abstracts all of this operational burden. You trade variable engineering time and infrastructure cost for a predictable, per-request API cost, allowing your team to focus on deriving insights from data rather than collecting it.

Pricing of Crawlkit

Crawlkit uses a simple, credit-based pricing model. You start with 100 free credits. When you need more, you buy credit packs, which come with volume discounts; for example, a pack of 25,000 credits is sold for a one-time payment. There are no monthly subscriptions or minimum spends. Each API endpoint costs a fixed number of credits per successful call (e.g., 1 credit for an Instagram profile, 2 for a LinkedIn profile). You pay only for what you use, and unused credits never expire, which keeps costs predictable for projects of any scale.
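Because every call has a fixed cost and failed calls are refunded, the credits a job needs can be bounded with simple arithmetic. This TypeScript sketch uses only the two per-call costs quoted above (1 credit per Instagram profile, 2 per LinkedIn profile); the `maxCredits` helper and the endpoint keys are illustrative names, not part of any Crawlkit SDK.

```typescript
// Per-call credit costs quoted in this listing. Costs for other endpoints
// are not given here, so they are deliberately left out.
const CREDIT_COST = {
  instagramProfile: 1,
  linkedinProfile: 2,
} as const;

type Endpoint = keyof typeof CREDIT_COST;

// Worst-case credits for a planned job: every call succeeds and is charged.
// Failed calls are refunded, so actual spend can only be lower.
function maxCredits(plan: Partial<Record<Endpoint, number>>): number {
  let total = 0;
  for (const endpoint of Object.keys(plan) as Endpoint[]) {
    total += CREDIT_COST[endpoint] * (plan[endpoint] ?? 0);
  }
  return total;
}

const FREE_CREDITS = 100;
const job = { instagramProfile: 40, linkedinProfile: 30 };
console.log(maxCredits(job), maxCredits(job) <= FREE_CREDITS); // 100 true
```

For example, 40 Instagram profiles and 30 LinkedIn profiles cost at most 40 × 1 + 30 × 2 = 100 credits, which fits within the 100 free starter credits.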


Similar to Crawlkit

Subiq

Subiq simplifies SaaS subscription management for small teams, helping you track tools, manage spending, and avoid costly renewals.

Toon Tone

Toon Tone is a free daily game where you match original cartoon colors using HSB sliders, enhancing your memory and color recognition skills.

FX Radar

FX Radar delivers lightning-fast market news and insights, empowering traders to make informed decisions with clarity and confidence.

GhostlyX Privacy-First Web Analytics

GhostlyX delivers essential analytics without cookies or tracking, respecting visitor privacy while providing clear, actionable insights.

Microplastic Intake App

Deplasto's research-backed app empowers you to measure, track, and avoid microplastic intake from your daily foods and drinks.

Webleadr

Webleadr helps you effortlessly find and contact businesses without websites, turning leads into clients in just a few clicks.

TubeAnalytics

TubeAnalytics empowers YouTube creators with AI-driven insights to optimize channel growth and maximize revenue effectively.

Metric Nexus

Metric Nexus centralizes your marketing data, allowing you to easily query performance insights using plain English with Claude.