Agenta vs OpenMark AI

Side-by-side comparison to help you choose the right AI tool.

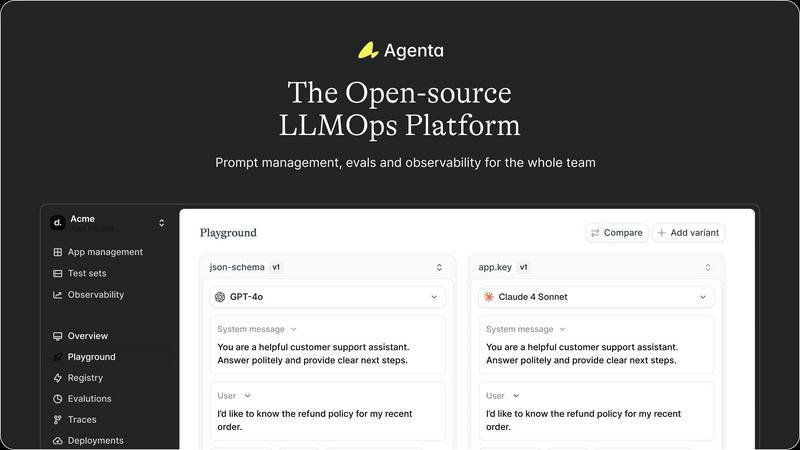

Agenta is the open-source platform for teams to collaboratively build and manage reliable LLM applications.

Last updated: March 1, 2026

OpenMark AI benchmarks over 100 LLMs on your specific task for cost, speed, and quality with no API keys or setup required.

Last updated: March 26, 2026

Feature Comparison

Agenta

Unified Playground & Versioning

Agenta provides a central playground where teams can experiment with different prompts and models and compare them side by side in real time. Every change is versioned automatically, creating a complete audit trail. Instead of prompts scattered across separate documents, every iteration is tracked and reproducible and can be analyzed or reverted easily, which gives collaborative development a solid foundation.
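
To make the versioning idea concrete, here is a minimal Python sketch of an append-only prompt history, assuming that each edit creates an immutable snapshot. The class and method names (`PromptVersion`, `PromptHistory`, `revert_to`) and the model names are hypothetical illustrations, not Agenta's SDK or data model.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


# Hypothetical data model for illustration; not Agenta's actual schema.
@dataclass(frozen=True)
class PromptVersion:
    """One immutable snapshot of a prompt configuration."""
    version: int
    template: str
    model: str
    author: str
    created_at: datetime


@dataclass
class PromptHistory:
    """Append-only history: every edit adds a version, none is overwritten."""
    versions: list[PromptVersion] = field(default_factory=list)

    def commit(self, template: str, model: str, author: str) -> PromptVersion:
        snapshot = PromptVersion(
            version=len(self.versions) + 1,
            template=template,
            model=model,
            author=author,
            created_at=datetime.now(timezone.utc),
        )
        self.versions.append(snapshot)
        return snapshot

    def latest(self) -> PromptVersion:
        return self.versions[-1]

    def revert_to(self, version: int, author: str) -> PromptVersion:
        # A revert re-commits an old snapshot as a new version,
        # so the audit trail records that the revert happened.
        old = self.versions[version - 1]
        return self.commit(old.template, old.model, author)


history = PromptHistory()
history.commit("Summarize: {text}", "model-a", "alice")
history.commit("Summarize in three bullet points: {text}", "model-a", "bob")
history.revert_to(1, "alice")
print(history.latest().version, history.latest().template)  # 3 Summarize: {text}
```

Treating a revert as a new commit, rather than deleting later versions, is what keeps the history a complete audit trail.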

Comprehensive Evaluation Suite

Agenta replaces guesswork with evidence through its evaluation framework. Teams can set up systematic processes to validate changes using LLM-as-a-judge, custom code evaluators, or built-in metrics. Crucially, Agenta can evaluate full agentic traces, testing each intermediate reasoning step rather than only the final output. Human evaluation is part of the same workflow, so domain experts can give qualitative feedback directly within it.
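
As an illustration of what a custom code evaluator can look like, the Python sketch below scores structured-extraction outputs and aggregates the scores over a small test set. The function names, the partial-credit scoring rule, and the test data are assumptions made for this example; Agenta's own evaluator interface may differ.

```python
import json
from typing import Callable

# Hypothetical evaluator signature: (model output, expected fields) -> score in [0, 1].
Evaluator = Callable[[str, dict], float]


def json_fields_match(output: str, expected: dict) -> float:
    """Fraction of expected fields the output reproduces exactly (0.0 if not a JSON object)."""
    try:
        parsed = json.loads(output)
    except json.JSONDecodeError:
        return 0.0
    if not isinstance(parsed, dict):
        return 0.0
    matched = sum(1 for key, value in expected.items() if parsed.get(key) == value)
    return matched / len(expected)


def run_evaluation(outputs: list, expected: list, evaluator: Evaluator, threshold: float = 1.0) -> dict:
    """Score every test case and report the mean score and the share that pass."""
    scores = [evaluator(out, exp) for out, exp in zip(outputs, expected)]
    return {
        "mean_score": sum(scores) / len(scores),
        "pass_rate": sum(score >= threshold for score in scores) / len(scores),
    }


outputs = ['{"product": "Lamp", "price": 25}', '{"product": "Desk"}', "not json"]
expected = [
    {"product": "Lamp", "price": 25},
    {"product": "Desk", "price": 120},
    {"product": "Chair", "price": 40},
]
result = run_evaluation(outputs, expected, json_fields_match)
print(result)  # {'mean_score': 0.5, 'pass_rate': 0.3333333333333333}
```

Returning a score rather than a pass/fail flag lets the same evaluator feed both an average and a stricter pass rate.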

Production Observability & Debugging

Agenta offers deep observability by tracing every LLM request in production. When errors occur, teams can pinpoint the exact failure point in complex chains or agentic workflows. Traces can be annotated collaboratively and turned into test cases with a single click, closing the feedback loop. Debugging becomes a precise, data-driven process instead of guesswork, and the same traces help monitor performance for regressions.
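
The trace-to-test-case loop can be sketched in Python under simple assumptions: a trace is an ordered list of spans, the first span holds the user's input, and a reviewer's annotation supplies the expected output. `Span`, `Trace`, and both helper functions are hypothetical and do not reflect Agenta's trace format.

```python
from dataclasses import dataclass
from typing import Optional


# Hypothetical trace model for illustration; not Agenta's actual trace schema.
@dataclass
class Span:
    name: str
    inputs: dict
    output: str
    error: Optional[str] = None


@dataclass
class Trace:
    trace_id: str
    spans: list[Span]  # ordered from the root call to the last nested step
    annotation: Optional[str] = None  # corrected answer added by a reviewer


def first_failure(trace: Trace) -> Optional[Span]:
    """Return the earliest span that recorded an error: the failure point."""
    return next((s for s in trace.spans if s.error is not None), None)


def trace_to_test_case(trace: Trace) -> dict:
    """Turn an annotated production trace into a regression test case."""
    if trace.annotation is None:
        raise ValueError("annotate the trace with the expected output first")
    root = trace.spans[0]
    return {
        "source_trace": trace.trace_id,
        "inputs": root.inputs,
        "expected_output": trace.annotation,
    }


trace = Trace(
    trace_id="t-42",
    spans=[
        Span("retrieve", {"query": "refund policy"}, "doc-7"),
        Span("answer", {"doc": "doc-7"}, "", error="context window exceeded"),
    ],
    annotation="Refunds are accepted within 30 days of purchase.",
)
print(first_failure(trace).name)  # answer
print(trace_to_test_case(trace)["inputs"])  # {'query': 'refund policy'}
```

Keeping the source trace ID on the test case makes it easy to trace a regression test back to the production incident that produced it.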

Collaborative, Model-Agnostic Infrastructure

Agenta is designed for cross-functional teams and breaks down silos between developers, product managers (PMs), and domain experts. Its UI and API have full feature parity, so programmatic and visual workflows meet in one hub. The platform is model-agnostic, supporting any provider (OpenAI, Anthropic, and others) and frameworks such as LangChain and LlamaIndex. This prevents vendor lock-in and lets teams use the best model for each task.
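
Model-agnostic designs usually rest on a thin, provider-neutral interface. The Python sketch below shows that pattern with `typing.Protocol`; `ChatModel`, `EchoModel`, and the registry keys are hypothetical stand-ins (the stub makes no network calls) and are not Agenta's integration API.

```python
from typing import Protocol


class ChatModel(Protocol):
    """Provider-neutral interface that application code depends on."""
    name: str

    def complete(self, prompt: str) -> str:
        ...


class EchoModel:
    """Hypothetical stand-in for a provider client; it makes no network calls."""

    def __init__(self, name: str, prefix: str) -> None:
        self.name = name
        self.prefix = prefix

    def complete(self, prompt: str) -> str:
        return f"{self.prefix}{prompt}"


def run_with(model: ChatModel, prompt: str) -> str:
    # Only the protocol is used here, so any conforming client can be swapped in.
    return model.complete(prompt)


# Hypothetical registry keyed by provider name.
REGISTRY = {
    "provider-a": EchoModel("provider-a", "[A] "),
    "provider-b": EchoModel("provider-b", "[B] "),
}
print(run_with(REGISTRY["provider-b"], "Hello"))  # [B] Hello
```

Because `run_with` depends only on the protocol, adding a provider means writing one adapter class rather than changing application code.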

OpenMark AI

Plain Language Task Benchmarking

The core of OpenMark AI is its intuitive, code-free interface. Users simply describe the task they want to test in natural language, such as "extract product names and prices from this customer email" or "generate a summary of this technical document." The platform handles the complexity of prompt formatting and execution across all supported models, making advanced benchmarking accessible to developers and product managers alike without requiring deep technical setup or scripting.

Multi-Model Comparison with Real API Data

OpenMark AI produces side-by-side comparisons by making live API calls to each model in its extensive catalog. You see real performance metrics (actual cost, measured latency, and the outputs each model actually returns) rather than cached results or idealized marketing numbers from model providers. Testing under real conditions is essential for deciding which model will perform best in a production environment.
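
A live comparison comes down to timing each call and pricing it from the token usage the provider reports. The Python sketch below assumes a `call` function that returns the output text together with input and output token counts, and uses placeholder per-million-token prices; `measure_call` and `fake_call` are hypothetical and illustrate the measurement, not OpenMark AI's implementation.

```python
import time
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class CallResult:
    output: str
    latency_s: float
    cost_usd: float


def measure_call(
    call: Callable[[str], Tuple[str, int, int]],
    prompt: str,
    usd_per_m_input: float,
    usd_per_m_output: float,
) -> CallResult:
    """Time one call and price it from the token counts it returns.

    `call` is assumed to return (output_text, input_tokens, output_tokens).
    """
    start = time.perf_counter()
    output, input_tokens, output_tokens = call(prompt)
    latency = time.perf_counter() - start
    cost = (input_tokens * usd_per_m_input + output_tokens * usd_per_m_output) / 1_000_000
    return CallResult(output, latency, cost)


def fake_call(prompt: str) -> Tuple[str, int, int]:
    # Hypothetical stand-in for a real API request.
    time.sleep(0.05)
    return "Lamp, $25", 1000, 200


result = measure_call(fake_call, "Extract product names and prices.", 0.50, 1.50)
print(f"{result.latency_s:.2f}s ${result.cost_usd:.4f}")
```

`time.perf_counter` is used because it is a monotonic, high-resolution clock suited to measuring short intervals.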

Variance and Stability Analysis

A standout feature is the platform's focus on consistency. Instead of judging a model on a single execution, OpenMark AI runs your task multiple times to measure stability. The results show variance in outputs, latency, and costs, highlighting whether a model is reliably good or just occasionally lucky. This insight is critical for building robust, predictable applications where consistent quality is non-negotiable.
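
One simple way to quantify stability is to score the same task several times and summarize the spread. The Python sketch below reports the mean, population standard deviation, coefficient of variation, and worst run; these statistics and the sample scores are assumptions chosen for illustration, since the platform's exact method is not described here.

```python
from statistics import mean, pstdev


def stability_report(scores: list) -> dict:
    """Summarize repeated quality scores (0 to 1) for one model on one task."""
    m = mean(scores)
    sd = pstdev(scores)
    return {
        "mean": round(m, 3),
        "stdev": round(sd, 3),
        "cv": round(sd / m, 3) if m else float("inf"),  # spread relative to the mean
        "worst": min(scores),
    }


steady = [0.8, 0.8, 0.8, 0.8]  # hypothetical scores from four runs
lucky = [1.0, 0.4, 1.0, 0.6]   # sometimes perfect, but erratic
print(stability_report(steady))
print(stability_report(lucky))
```

The "lucky" model produces the single best output, yet its lower mean, larger spread, and poor worst case make it the riskier choice.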

Unified Credit-Based Hosted Platform

OpenMark AI streamlines benchmarking by operating on a hosted credit system. Users do not need to supply, manage, or pay directly for individual API keys from OpenAI, Anthropic, Google, or other providers. This removes the configuration overhead and security burden of handling many provider keys, so teams can focus on evaluating model performance and cost-efficiency across the AI ecosystem from one central dashboard.

Use Cases

Agenta

Streamlining Cross-Functional AI Product Development

For teams building customer-facing LLM applications, Agenta unites developers, product managers, and subject matter experts on a single platform. PMs can define test sets and success criteria, experts can refine prompts and provide human feedback via the UI, and developers can implement complex agentic logic—all while maintaining a shared version history and evidence base for every decision, dramatically speeding up the iteration cycle.

Implementing Rigorous LLM Evaluation & Benchmarking

Organizations needing to systematically improve AI quality use Agenta to establish a rigorous evaluation pipeline. Teams can run automated A/B tests between prompt versions or model providers, evaluate performance on curated test sets, and combine automated scores with human ratings. This is critical for applications where reliability, safety, or factual accuracy are paramount, ensuring every deployment is backed by data.
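
An A/B test between two prompt versions is most informative when both variants are scored on the same test cases, so each case is compared with itself. The Python sketch below counts per-case wins, losses, and ties and reports the mean score difference; the `paired_ab` function, the tie margin, and the sample scores are hypothetical, not part of Agenta.

```python
def paired_ab(scores_a: list, scores_b: list, tie_margin: float = 0.0) -> dict:
    """Compare variant B against variant A, scored on the same test cases in the same order."""
    if len(scores_a) != len(scores_b):
        raise ValueError("both variants must be scored on the same test set")
    diffs = [b - a for a, b in zip(scores_a, scores_b)]
    return {
        "b_wins": sum(d > tie_margin for d in diffs),
        "b_losses": sum(d < -tie_margin for d in diffs),
        "ties": sum(-tie_margin <= d <= tie_margin for d in diffs),
        "mean_diff": sum(diffs) / len(diffs),
    }


# Hypothetical per-case scores for two prompt versions on a four-case test set.
version_a = [0.5, 0.5, 1.0, 0.0]
version_b = [1.0, 0.5, 1.0, 0.5]
print(paired_ab(version_a, version_b))
# {'b_wins': 2, 'b_losses': 0, 'ties': 2, 'mean_diff': 0.25}
```

Pairing by test case removes differences in case difficulty from the comparison, which matters when the test set is small.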

Debugging Complex Agentic Systems in Production

When a multi-step AI agent fails in production, traditional logging is insufficient. Agenta's trace observability allows engineers to replay the exact sequence of LLM calls, tool executions, and reasoning steps that led to an error. By saving faulty traces as test cases and experimenting with fixes in the playground, teams can quickly diagnose root causes and deploy validated solutions, reducing mean time to resolution.

Centralizing Prompt Management & Governance

Companies struggling with "prompt sprawl" across Slack, Google Docs, and code repositories use Agenta as their system of record. It centralizes all prompts, their versions, associated evaluations, and performance data. This governance model ensures compliance, enables knowledge sharing, and provides visibility into which prompts are deployed where, turning a management headache into a structured asset.

OpenMark AI

Pre-Deployment Model Selection for New Features

Before integrating an LLM into a new application feature—like a chatbot or content generator—teams can use OpenMark AI to empirically test which model delivers the best quality for their specific prompts at an acceptable cost and latency. This data-driven selection mitigates the risk of shipping with an underperforming or prohibitively expensive model, ensuring a stronger product launch.

Cost Optimization for Existing AI Workflows

For teams already using AI in production, OpenMark AI serves as a powerful optimization tool. By benchmarking their current prompts against newer or alternative models, they can identify opportunities to maintain or improve output quality while significantly reducing monthly API expenses, directly impacting the bottom line.
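
The savings estimate is simple arithmetic on token volume and per-token prices. The Python sketch below compares two models on the same hypothetical workload; the traffic numbers and per-million-token prices are placeholders, not real provider pricing, and the comparison only matters if benchmarking shows the cheaper model keeps output quality acceptable.

```python
def monthly_cost(
    requests_per_month: int,
    avg_input_tokens: int,
    avg_output_tokens: int,
    usd_per_m_input: float,
    usd_per_m_output: float,
) -> float:
    """Projected monthly API spend for one model on a fixed workload."""
    tokens_cost = avg_input_tokens * usd_per_m_input + avg_output_tokens * usd_per_m_output
    return requests_per_month * tokens_cost / 1_000_000


# Hypothetical workload and placeholder prices (USD per million tokens).
workload = dict(requests_per_month=1_000_000, avg_input_tokens=800, avg_output_tokens=200)
current = monthly_cost(**workload, usd_per_m_input=2.50, usd_per_m_output=10.00)
alternative = monthly_cost(**workload, usd_per_m_input=0.50, usd_per_m_output=1.50)
print(f"current ${current:,.0f}, alternative ${alternative:,.0f}, saving ${current - alternative:,.0f}")
# current $4,000, alternative $700, saving $3,300
```

Output tokens are often priced higher than input tokens, so workloads that generate long responses are the most sensitive to the model choice.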

Validating Model Consistency for Critical Tasks

In applications where reliability is paramount, such as legal document analysis, medical information extraction, or financial data processing, consistency is as important as accuracy. OpenMark AI's stability testing helps teams identify models that produce dependable, low-variance results every time, which is essential for building trust and ensuring compliance in sensitive workflows.

Comparative Research and Development (R&D)

AI researchers and developers exploring new prompting techniques, evaluating emerging models, or building complex agentic systems can use OpenMark AI as a rapid testing ground. It allows for quick iteration and comparison across a broad model landscape to understand nuanced performance differences for tasks like classification, translation, or RAG (Retrieval-Augmented Generation) without operational hassle.

Overview

About Agenta

Agenta is an open-source LLMOps platform built to bring order to the chaos of modern LLM application development. It acts as a central command center for AI teams, bridging the gap between rapid experimentation and reliable production deployment. It is built for collaborative teams of developers, product managers, and subject matter experts who are tired of prompts scattered across Slack, siloed workflows, and risky "vibe testing" of changes before shipping.

For developers, Agenta provides the integrated tooling needed to implement LLMOps best practices: systematic experimentation with prompts and models, automated evaluations, and deep production observability. For product managers and domain experts, it offers an accessible UI for taking part directly in the AI development lifecycle, including editing prompts, running evaluations, and providing feedback without writing code.

Its core value proposition is turning unpredictability into a structured, evidence-based process. As a single source of truth for the entire LLM lifecycle, Agenta helps organizations build, evaluate, debug, and ship AI applications with confidence, replacing guesswork with governance and shortening the path from prototype to production.

About OpenMark AI

OpenMark AI is a hosted web application for a critical and often opaque problem in modern AI development: choosing the right large language model (LLM) for a specific task. Instead of relying on theoretical benchmarks and marketing claims, it tests real-world performance at the task level. Developers and product teams describe their use case in plain language, whether data extraction, customer support Q&A, or complex agentic workflows, and OpenMark AI runs the same prompt against a catalog of over 100 models in a single session.

The platform compares the results side by side on four real-world metrics: the actual cost per API request, latency, scored output quality, and, crucially, stability across repeat runs, which reveals performance variance. Decisions are therefore based on consistent data rather than a single "lucky" output. Because it runs on a credit system, teams do not need to configure and manage separate API keys for OpenAI, Anthropic, Google, and other providers for every comparison.

OpenMark AI is built for pre-deployment validation, helping teams ship AI features with confidence that they have selected the most cost-efficient and reliable model for their exact needs.

Frequently Asked Questions

Agenta FAQ

Is Agenta truly open-source?

Yes, Agenta is a fully open-source platform. The core codebase is publicly available on GitHub, where developers can review the code, contribute to the project, and self-host the entire platform. This open model ensures transparency, avoids vendor lock-in, and allows the tool to be customized to fit specific organizational needs and integrated deeply into existing infrastructure.

How does Agenta handle collaboration for non-technical team members?

Agenta is specifically designed with a strong UI layer for non-technical participants. Product managers and domain experts can access the playground to safely edit and experiment with prompts without touching code. They can also view evaluation results, compare experiments, and provide human feedback or annotations directly through the web interface, making the AI development process truly collaborative.

Can I use Agenta with any LLM provider or framework?

Absolutely. Agenta is both model-agnostic and framework-agnostic. It integrates with major providers such as OpenAI, Anthropic, and Cohere, as well as open-source models served through Ollama or Replicate. It also works with popular development frameworks such as LangChain and LlamaIndex. This flexibility lets teams choose the best tools for each task and switch providers without overhauling their operations platform.

What is the difference between Agenta's evaluation and simple unit testing?

While unit tests check code logic, Agenta's evaluation assesses the probabilistic output of LLMs. It allows you to evaluate the full reasoning trace of an agent, not just the final string output. You can employ LLM-as-a-judge evaluators, custom code checks, and human scoring in a unified workflow. This creates a holistic, systematic process to measure the quality, reliability, and correctness of AI behavior against real-world scenarios.
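
The difference is easiest to see when the final answer looks fine but an intermediate step was wrong. The Python sketch below checks every tool call in an agent trace as well as the final answer; the tool names, required-argument rules, and `evaluate_trace` function are hypothetical and are not Agenta's evaluator API.

```python
from dataclasses import dataclass


@dataclass
class Step:
    tool: str
    args: dict


# Hypothetical rules: which tools the agent may call and the arguments each requires.
REQUIRED_ARGS = {
    "search_orders": {"order_id"},
    "issue_refund": {"order_id", "amount"},
}


def evaluate_trace(steps: list, final_answer: str, must_mention: str) -> dict:
    """Check each intermediate step as well as the final output."""
    failed = []
    for i, step in enumerate(steps):
        required = REQUIRED_ARGS.get(step.tool)
        # A step fails if the tool is unknown or a required argument is missing.
        if required is None or not required.issubset(step.args):
            failed.append((i, step.tool))
    return {
        "final_ok": must_mention.lower() in final_answer.lower(),
        "steps_ok": not failed,
        "failed_steps": failed,
    }


steps = [
    Step("search_orders", {"order_id": "A1"}),
    Step("issue_refund", {"order_id": "A1"}),  # "amount" is missing
]
print(evaluate_trace(steps, "Your refund has been issued.", "refund"))
# {'final_ok': True, 'steps_ok': False, 'failed_steps': [(1, 'issue_refund')]}
```

A check on the final string alone would pass this trace; only the step-level check reveals that the refund call was malformed.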

OpenMark AI FAQ

How does OpenMark AI calculate the quality score for model outputs?

OpenMark AI combines automated evaluation metrics with, where applicable, human-defined rubrics tailored to your specific task. The system assesses factors such as correctness, completeness, adherence to instructions, and coherence. Because it runs multiple iterations, the resulting score is statistically more reliable and reflects the model's typical capability rather than a one-off result.
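
A rubric score of this kind can be sketched as a weighted average of per-criterion scores, averaged over repeated runs, with the standard error showing how far that average can be trusted. The weights and the Python functions below are hypothetical and are not OpenMark AI's actual scoring formula.

```python
from math import sqrt
from statistics import mean, stdev

# Hypothetical criterion weights (they sum to 1); not OpenMark AI's real weights.
WEIGHTS = {"correctness": 0.4, "completeness": 0.2, "adherence": 0.2, "coherence": 0.2}


def rubric_score(criteria: dict) -> float:
    """Weighted average of per-criterion scores in [0, 1] for a single run."""
    return sum(weight * criteria[name] for name, weight in WEIGHTS.items())


def aggregate(runs: list) -> tuple:
    """Mean rubric score across runs and its standard error."""
    scores = [rubric_score(r) for r in runs]
    if len(scores) < 2:
        raise ValueError("need at least two runs to estimate spread")
    return mean(scores), stdev(scores) / sqrt(len(scores))


runs = [
    {"correctness": 1.0, "completeness": 1.0, "adherence": 1.0, "coherence": 1.0},
    {"correctness": 0.0, "completeness": 1.0, "adherence": 1.0, "coherence": 1.0},
]
score, std_err = aggregate(runs)
print(f"score {score:.2f} ± {std_err:.2f}")  # score 0.80 ± 0.20
```

The standard error shrinks roughly with the square root of the number of runs, which is why more repetitions give a more dependable score.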

Do I need my own API keys to use OpenMark AI?

No. One of the primary advantages of OpenMark AI is that it operates on a hosted credit system. You purchase credits from OpenMark, and the platform manages all the underlying API calls to the various model providers (OpenAI, Anthropic, Google, etc.) on your behalf. You do not need to set up, manage, or fund separate accounts and keys for every service you want to test.

What is the difference between a "task" and a "benchmark" in OpenMark?

A "task" is your core unit of work: the specific prompt and instructions you want to test, described in plain language. A "benchmark" is an execution of that task. When you run a benchmark, OpenMark AI sends your task to the selected models (one or many), collects the real API results, and compiles a comparative analysis of cost, latency, quality, and stability.
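
The distinction maps naturally onto two data structures: a reusable task definition and a benchmark run that executes it. The Python sketch below is a hypothetical model of that relationship (the class names, `runs_per_model`, and the stub `call` function are assumptions), not OpenMark AI's API.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass(frozen=True)
class Task:
    """What you want to test: a plain-language description and the prompt."""
    description: str
    prompt: str


@dataclass
class Benchmark:
    """One execution of a Task against a chosen set of models."""
    task: Task
    models: list
    runs_per_model: int = 3
    results: dict = field(default_factory=dict)

    def run(self, call: Callable[[str, str], str]) -> dict:
        # call(model_name, prompt) stands in for a live API request.
        for model in self.models:
            self.results[model] = [call(model, self.task.prompt) for _ in range(self.runs_per_model)]
        return self.results


task = Task(
    description="Extract product names and prices from a customer email",
    prompt="List every product and its price mentioned in this email.",
)
# The same task can be benchmarked again later, e.g. against newer models.
first = Benchmark(task, models=["model-a", "model-b"], runs_per_model=2)
outputs = first.run(lambda model, prompt: f"{model} answered")
print(outputs)
# {'model-a': ['model-a answered', 'model-a answered'], 'model-b': ['model-b answered', 'model-b answered']}
```

Making `Task` immutable keeps every benchmark of it comparable, since the prompt cannot drift between runs.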

How does OpenMark AI help with cost efficiency compared to just choosing the cheapest model?

Cost efficiency is about value, not just price. A cheaper model might produce low-quality outputs that require expensive human review or drive users away. OpenMark AI lets you see the trade-off directly: whether paying slightly more for a different model yields dramatically better quality or consistency. That can save money on corrections and improve user satisfaction, giving a more realistic picture of total cost of ownership than per-call price alone.
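
The trade-off can be made concrete with a simple expected-cost formula: the API cost per request plus the chance that an output is unacceptable times the cost of fixing it. The Python sketch below applies that formula to two hypothetical models; the formula is a simplification chosen for illustration and all figures are made up, not OpenMark AI data.

```python
def expected_cost_per_request(api_cost: float, acceptable_rate: float, fix_cost: float) -> float:
    """API cost plus the expected cost of fixing outputs that fail review.

    acceptable_rate: fraction of outputs usable as-is (0 to 1).
    fix_cost: cost of a human correcting one unacceptable output.
    """
    if not 0.0 <= acceptable_rate <= 1.0:
        raise ValueError("acceptable_rate must be between 0 and 1")
    return api_cost + (1.0 - acceptable_rate) * fix_cost


# Hypothetical figures in USD.
cheap = expected_cost_per_request(api_cost=0.001, acceptable_rate=0.80, fix_cost=0.50)
pricier = expected_cost_per_request(api_cost=0.010, acceptable_rate=0.98, fix_cost=0.50)
print(f"cheap ${cheap:.3f}/request, pricier ${pricier:.3f}/request")
# cheap $0.101/request, pricier $0.020/request
```

In this made-up example, the model that costs ten times more per call is about five times cheaper per request once fixes are included.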

Alternatives

Agenta Alternatives

Agenta is an open-source LLMOps platform designed to bring order and collaboration to the development of large language model applications. It serves as a centralized hub for teams to experiment, evaluate, and deploy AI features systematically, moving beyond ad-hoc prompt management and unreliable testing.

Users explore alternatives for various reasons. Some require a fully managed, proprietary solution with dedicated support, while others might seek a platform with a narrower focus, such as only production monitoring or only prompt management. Budget, team size, and the need for specific integrations or deployment models also drive the search for different tools.

When evaluating an alternative, consider your team's primary pain points. Key factors include the platform's approach to collaborative experimentation, the depth of its evaluation and testing framework, its observability and debugging capabilities for production systems, and whether its licensing and deployment model aligns with your technical and financial constraints.

OpenMark AI Alternatives

OpenMark AI is a developer tool for task-level benchmarking of large language models. It allows teams to test many LLMs simultaneously on their specific use case, comparing real-world metrics like cost, latency, quality, and output stability from actual API calls.

Users may explore alternatives for various reasons, such as budget constraints, a need for different feature sets like automated testing integration, or a preference for self-hosted solutions that offer more data control. Some may seek tools with a stronger focus on post-deployment monitoring rather than pre-launch validation.

When evaluating other options, consider what truly matters for your project. Key factors include the breadth of supported models, whether the tool uses real API data or estimates, the depth of analysis on cost versus quality, and how it measures output consistency across multiple runs to ensure reliable performance.