Agenta vs diffray

Side-by-side comparison to help you choose the right AI tool.

Agenta is the open-source platform for teams to collaboratively build and manage reliable LLM applications.

Last updated: March 1, 2026

diffray

diffray's AI agents review code with high accuracy, catching real bugs while drastically reducing false alarms.

Last updated: February 28, 2026

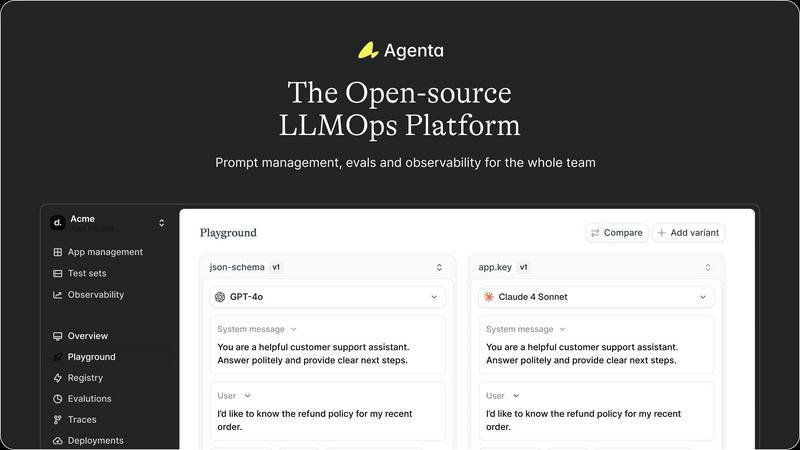

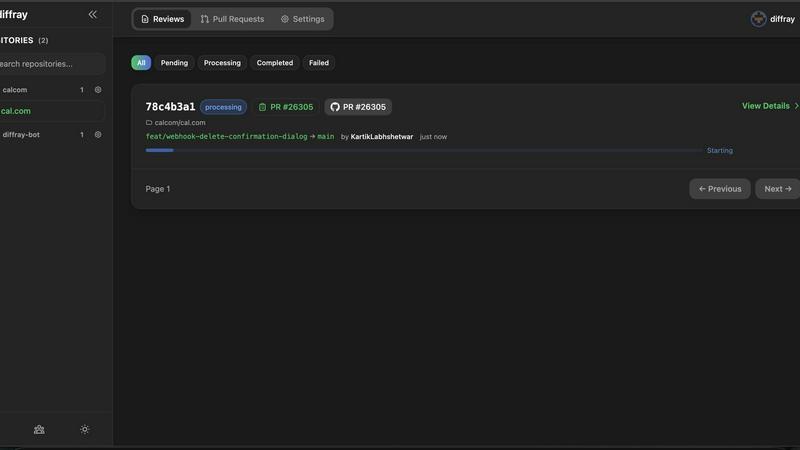


Feature Comparison

Agenta

Unified Playground & Versioning

Agenta provides a centralized playground where teams can experiment with different prompts and models and compare them side by side in real time. Every change is automatically versioned, creating a complete audit trail. This replaces prompts scattered across disparate documents and ensures that every iteration is tracked, reproducible, and easy to revert or analyze, providing a solid foundation for collaborative development.
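To make the versioning idea concrete, here is a minimal Python sketch of an append-only prompt history in which a revert is recorded as a new version rather than by rewriting the past. It is an illustration only: `PromptVersion`, `PromptHistory`, and the model names are hypothetical and are not Agenta's SDK or data model.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class PromptVersion:
    """One immutable snapshot of a prompt configuration (hypothetical shape)."""
    version: int
    template: str
    model: str
    author: str
    created_at: datetime


@dataclass
class PromptHistory:
    """Append-only history: every edit, including a revert, adds a new version."""
    versions: list[PromptVersion] = field(default_factory=list)

    def commit(self, template: str, model: str, author: str) -> PromptVersion:
        snapshot = PromptVersion(
            version=len(self.versions) + 1,
            template=template,
            model=model,
            author=author,
            created_at=datetime.now(timezone.utc),
        )
        self.versions.append(snapshot)
        return snapshot

    def latest(self) -> PromptVersion:
        return self.versions[-1]

    def revert_to(self, version: int, author: str) -> PromptVersion:
        if not 1 <= version <= len(self.versions):
            raise ValueError(f"unknown version {version}")
        # Re-commit the old snapshot instead of deleting newer versions,
        # so the audit trail is never rewritten.
        old = self.versions[version - 1]
        return self.commit(old.template, old.model, author)


if __name__ == "__main__":
    history = PromptHistory()
    history.commit("Summarize: {text}", "model-a", "alice")
    history.commit("Summarize in three bullets: {text}", "model-b", "bob")
    restored = history.revert_to(1, "alice")
    print(restored.version, restored.template, restored.model)
```

Treating a revert as a new commit keeps the history linear: in the demo, version 3 carries version 1's template and model, while versions 1 and 2 remain available for later analysis.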

Comprehensive Evaluation Suite

The platform replaces guesswork with evidence through a robust evaluation framework. Teams can create systematic processes to validate changes using LLM-as-a-judge, custom code evaluators, or built-in metrics. Crucially, Agenta allows evaluation of full agentic traces, testing each intermediate reasoning step, not just the final output. It also seamlessly integrates human evaluation, enabling domain experts to provide qualitative feedback directly within the workflow.
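A minimal sketch of step-level evaluation, assuming a trace is simply an ordered list of named steps. Only custom code evaluators are shown, because an LLM-as-a-judge evaluator would need a live model call; `Step`, `evaluate_trace`, and both scoring rules are hypothetical and not part of Agenta's API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Step:
    """One intermediate step of an agent trace (hypothetical shape)."""
    name: str    # e.g. "retrieve", "reason", "answer"
    output: str


# A custom code evaluator maps one step to a score in [0, 1].
Evaluator = Callable[[Step], float]


def non_empty(step: Step) -> float:
    return 1.0 if step.output.strip() else 0.0


def cites_source(step: Step) -> float:
    # Hypothetical rule: only "answer" steps must contain a citation marker like "[1]".
    if step.name != "answer":
        return 1.0
    return 1.0 if "[" in step.output and "]" in step.output else 0.0


def evaluate_trace(
    trace: list[Step], evaluators: dict[str, Evaluator]
) -> dict[str, dict[str, float]]:
    """Score every step with every evaluator, keyed as "<index>:<step name>"."""
    return {
        f"{i}:{step.name}": {name: fn(step) for name, fn in evaluators.items()}
        for i, step in enumerate(trace)
    }


if __name__ == "__main__":
    trace = [
        Step("retrieve", "doc A; doc B"),
        Step("reason", ""),  # an empty intermediate step that an output-only check would miss
        Step("answer", "Paris [1]"),
    ]
    print(evaluate_trace(trace, {"non_empty": non_empty, "cites_source": cites_source}))
```

In the demo trace the final answer passes both checks, yet the empty `reason` step scores 0 on `non_empty`, which is the kind of failure that testing only the final output cannot see.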

Production Observability & Debugging

Agenta offers deep observability by tracing every LLM request in production. When errors occur, teams can pinpoint the exact failure point in complex chains or agentic workflows. Traces can be annotated collaboratively and turned into test cases with a single click, closing the feedback loop. This turns debugging from a speculative exercise into a precise, data-driven process and helps teams catch performance regressions.
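The trace-to-test-case loop can be sketched in Python as follows. The `Span` structure, `find_failure_point`, and the test-case dictionary layout are assumptions made for illustration; Agenta's actual trace schema and its one-click conversion may look quite different.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Span:
    """One LLM call or tool execution within a production trace (hypothetical shape)."""
    name: str
    inputs: dict
    output: str
    error: Optional[str] = None
    children: list["Span"] = field(default_factory=list)


def find_failure_point(span: Span) -> Optional[Span]:
    """Depth-first search that returns a failing descendant before its failing ancestors.

    Parents often record an error only because a child failed, so the
    innermost failing span is usually the better pinpoint.
    """
    for child in span.children:
        found = find_failure_point(child)
        if found is not None:
            return found
    return span if span.error is not None else None


def to_test_case(root: Span, expected_output: str, note: str = "") -> dict:
    """Turn a trace plus a reviewer's annotation into a reusable test case."""
    return {
        "inputs": root.inputs,
        "expected_output": expected_output,
        "annotation": note,
    }
```

A reviewer who finds that a search tool timed out would annotate the trace, record the answer it should have produced, and add the resulting case to the test set so the fix can be validated before redeployment.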

Collaborative, Model-Agnostic Infrastructure

Designed for cross-functional teams, Agenta breaks down silos between developers, product managers (PMs), and domain experts. It provides full parity between its UI and API, bringing programmatic and visual workflows into one hub. The platform is model-agnostic, supporting any provider (OpenAI, Anthropic, etc.) and framework (LangChain, LlamaIndex), which prevents vendor lock-in and lets teams use the best model for each task.
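Model-agnosticism usually comes down to coding against a small interface rather than a specific vendor SDK. The sketch below shows that pattern with `typing.Protocol`; `ChatModel`, the two stand-in providers, and `run_prompt` are hypothetical, and real adapters would wrap the OpenAI, Anthropic, or other client libraries instead of returning canned strings.

```python
from typing import Protocol


class ChatModel(Protocol):
    """Provider-neutral interface (a hypothetical abstraction, not a real SDK)."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Offline stand-in; a real adapter would wrap a vendor SDK call here."""

    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


class ShoutModel:
    """A second stand-in, showing that callers do not change when the provider does."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


def run_prompt(model: ChatModel, template: str, **variables: str) -> str:
    # Application code depends only on ChatModel, so swapping providers
    # means passing a different object, not rewriting this function.
    return model.complete(template.format(**variables))


PROVIDERS: dict[str, ChatModel] = {"echo": EchoModel(), "shout": ShoutModel()}

if __name__ == "__main__":
    for name, model in PROVIDERS.items():
        print(name, run_prompt(model, "Hello, {user}", user="Ada"))
```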

diffray

Multi-Agent Specialized Architecture

Unlike monolithic AI tools that use a single model for all tasks, diffray relies on an orchestrated fleet of over 30 specialized agents. Each agent is fine-tuned for a specific niche, such as detecting SQL injection vulnerabilities, identifying memory leaks in specific languages, flagging code style deviations, or analyzing front-end code for SEO best practices. This division of labor means every aspect of a code change is examined by a specialist, producing more accurate and context-aware feedback than a single general-purpose model typically delivers.
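The division of labor described above can be sketched as a fan-out over independent reviewers. This is a deliberately simplified toy: the three agents below are string heuristics standing in for diffray's fine-tuned models, and `Finding`, `AGENTS`, and `review` are hypothetical names, not diffray's architecture or API.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Finding:
    agent: str
    line: int
    message: str


# Each specialist receives the added lines of a diff and returns its findings.
Agent = Callable[[list[str]], list[Finding]]


def sql_injection_agent(lines: list[str]) -> list[Finding]:
    # Toy heuristic: a query string built by interpolation and passed to execute().
    return [
        Finding("sql-injection", n, "query appears to be built from interpolated input")
        for n, line in enumerate(lines, start=1)
        if "execute(" in line and any(tok in line for tok in ('f"', "f'", ".format(", " + "))
    ]


def debug_print_agent(lines: list[str]) -> list[Finding]:
    return [
        Finding("debug-print", n, "leftover print() call")
        for n, line in enumerate(lines, start=1)
        if line.lstrip().startswith("print(")
    ]


def line_length_agent(lines: list[str], limit: int = 100) -> list[Finding]:
    return [
        Finding("line-length", n, f"line exceeds {limit} characters")
        for n, line in enumerate(lines, start=1)
        if len(line) > limit
    ]


AGENTS: dict[str, Agent] = {
    "sql-injection": sql_injection_agent,
    "debug-print": debug_print_agent,
    "line-length": line_length_agent,
}


def review(lines: list[str], agents: dict[str, Agent]) -> list[Finding]:
    """Run every specialist on the same diff concurrently and merge the results."""
    with ThreadPoolExecutor() as pool:
        batches = list(pool.map(lambda agent: agent(lines), agents.values()))
    return sorted((f for batch in batches for f in batch), key=lambda f: (f.line, f.agent))
```

Because each specialist encodes only its own concern, the SQL check can afford to know that a parameterized `execute("... = ?", (uid,))` call is safe, so it flags interpolated queries without flagging every query.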

Drastic Noise and False Positive Reduction

A primary pain point in automated code review is the deluge of irrelevant or incorrect warnings. diffray's targeted agent strategy addresses this directly, with a reported 87% reduction in false positives. Because each agent understands the context and rules of its own domain, the tool filters out the noise that plagues other systems. Developers spend far less time dismissing bogus alerts and can trust and act on the issues diffray surfaces, which streamlines the review workflow.

Comprehensive Issue Detection Matrix

diffray's multi-faceted analysis provides a 360-degree review of every pull request. The system concurrently checks for security flaws, performance bottlenecks, logical bugs, adherence to coding standards, and maintainability concerns. As a result, a single pass over a PR can uncover a wide range of problems, from a critical authentication bypass to an inefficient loop that is simple to write but costly to run, that a manual review or a less comprehensive tool might miss, leading to more robust, higher-quality software.

Seamless Integration and Actionable Feedback

diffray is built for the developer's workflow, integrating directly into popular version control platforms like GitHub and GitLab. It provides clear, concise, and actionable comments directly on the pull request diff. Feedback is not just a generic warning; it often includes explanations of why something is an issue and may suggest concrete fixes or best practice examples. This educational aspect accelerates developer learning and team standardization, turning every code review into a learning opportunity.
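To show what a comment placed directly on the pull request diff can look like at the API level, the sketch below builds a request for GitHub's public REST endpoint for creating a pull request review comment, with an optional suggested fix. It assumes this kind of mechanism; the text does not say how diffray posts its comments. `ReviewComment` and `build_pr_comment_request` are hypothetical helpers, and GitLab would need its own API.

```python
import json
from dataclasses import dataclass
from typing import Optional


@dataclass
class ReviewComment:
    path: str                         # file path relative to the repository root
    line: int                         # line number in the new version of the file
    message: str                      # explanation of why the code is a problem
    suggestion: Optional[str] = None  # optional replacement code


def build_pr_comment_request(
    owner: str, repo: str, pull_number: int, commit_id: str, comment: ReviewComment
) -> tuple[str, str]:
    """Return (url, JSON body) for GitHub's "create a review comment" endpoint.

    Nothing is sent here; a real integration would POST this with an auth token.
    """
    url = f"https://api.github.com/repos/{owner}/{repo}/pulls/{pull_number}/comments"
    body = comment.message
    if comment.suggestion is not None:
        fence = "`" * 3  # GitHub renders a fenced block tagged "suggestion" as an applicable change
        body += f"\n\n{fence}suggestion\n{comment.suggestion}\n{fence}"
    payload = {
        "body": body,
        "commit_id": commit_id,
        "path": comment.path,
        "line": comment.line,
        "side": "RIGHT",  # comment on the new (right-hand) side of the diff
    }
    return url, json.dumps(payload)
```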

Use Cases

Agenta

Streamlining Cross-Functional AI Product Development

For teams building customer-facing LLM applications, Agenta unites developers, product managers, and subject matter experts on a single platform. PMs can define test sets and success criteria, experts can refine prompts and provide human feedback via the UI, and developers can implement complex agentic logic—all while maintaining a shared version history and evidence base for every decision, dramatically speeding up the iteration cycle.

Implementing Rigorous LLM Evaluation & Benchmarking

Organizations needing to systematically improve AI quality use Agenta to establish a rigorous evaluation pipeline. Teams can run automated A/B tests between prompt versions or model providers, evaluate performance on curated test sets, and combine automated scores with human ratings. This is critical for applications where reliability, safety, or factual accuracy are paramount, ensuring every deployment is backed by data.
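A small sketch of the comparison step, assuming each test case has already been scored in [0, 1] for both prompt versions. The paired win/tie counts and the 50/50 `human_weight` default are illustrative choices, not Agenta's scoring method; in practice the weighting and any significance testing would be a team decision.

```python
from statistics import mean


def blend(auto_score: float, human_score: float, human_weight: float = 0.5) -> float:
    """Weighted combination of an automated score and a human rating, both in [0, 1]."""
    if not 0.0 <= human_weight <= 1.0:
        raise ValueError("human_weight must be between 0 and 1")
    return (1 - human_weight) * auto_score + human_weight * human_score


def compare_variants(scores_a: list[float], scores_b: list[float]) -> dict[str, float]:
    """Paired comparison of two prompt versions scored on the same test set."""
    if len(scores_a) != len(scores_b) or not scores_a:
        raise ValueError("both variants must be scored on the same non-empty test set")
    b_wins = sum(b > a for a, b in zip(scores_a, scores_b))
    ties = sum(a == b for a, b in zip(scores_a, scores_b))
    return {
        "mean_a": mean(scores_a),
        "mean_b": mean(scores_b),
        "a_wins": len(scores_a) - b_wins - ties,
        "b_wins": b_wins,
        "ties": ties,
    }
```

Counting per-case wins alongside the means helps reveal when a higher average comes from a few large gains while most cases regress.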

Debugging Complex Agentic Systems in Production

When a multi-step AI agent fails in production, traditional logging is insufficient. Agenta's trace observability allows engineers to replay the exact sequence of LLM calls, tool executions, and reasoning steps that led to an error. By saving faulty traces as test cases and experimenting with fixes in the playground, teams can quickly diagnose root causes and deploy validated solutions, reducing mean time to resolution.

Centralizing Prompt Management & Governance

Companies struggling with "prompt sprawl" across Slack, Google Docs, and code repositories use Agenta as their system of record. It centralizes all prompts, their versions, associated evaluations, and performance data. This governance model ensures compliance, enables knowledge sharing, and provides visibility into which prompts are deployed where, turning a management headache into a structured asset.

diffray

Accelerating Enterprise Development Cycles

For large organizations with multiple teams and high PR volume, manual review backlogs can cripple velocity. diffray acts as a first-line, expert reviewer that never sleeps. It automatically analyzes every PR, providing immediate, high-quality feedback to authors before human reviewers even begin. This pre-qualification reduces the cognitive load on senior engineers, cuts average review time by over 70%, and allows enterprises to maintain high code quality while shipping features faster.

Onboarding Junior Developers and Enforcing Standards

New team members often struggle with codebase-specific conventions and best practices. diffray serves as an always-available mentor, giving instant feedback on code style, architecture patterns, and potential pitfalls on every pull request they open. This immediate guidance helps junior developers learn faster and produce code that aligns with team standards from day one, reducing the review burden on senior developers and improving overall code consistency.

Proactive Security and Compliance Auditing

In regulated industries or for applications handling sensitive data, security cannot be an afterthought. diffray's dedicated security agents continuously scan every code change for vulnerabilities like injection flaws, insecure dependencies, and misconfigurations. This integrates security directly into the development process (shifting it left), enabling teams to identify and remediate risks early, often before the code is even merged, which is far more efficient and secure than post-hoc penetration testing.

Maintaining Code Quality in Fast-Paced Startups

Startup development teams need to move quickly without accruing technical debt. diffray provides the scalable "quality gate" that a small team lacks. It ensures that even under tight deadlines, fundamental best practices, performance considerations, and bug-prone patterns are caught automatically. This allows small, agile teams to maintain a high standard of code health and long-term maintainability without sacrificing their crucial development speed.

Overview

About Agenta

Agenta is an open-source LLMOps platform engineered to solve the fundamental chaos of modern LLM application development. It acts as a centralized command center for AI teams, bridging the critical gap between rapid experimentation and reliable production deployment. The platform is built for collaborative teams of developers, product managers, and subject matter experts who are tired of scattered prompts in Slack, siloed workflows, and the perilous "vibe testing" of changes before shipping.

From a developer's perspective, Agenta provides the integrated tooling needed to implement LLMOps best practices, enabling systematic experimentation with prompts and models, automated evaluations, and deep production observability. For product managers and domain experts, it offers a unified, accessible UI for participating directly in the AI development lifecycle: editing prompts, running evaluations, and providing feedback without writing code.

Its core value proposition is turning unpredictability into a structured, evidence-based process. By offering a single source of truth for the entire LLM lifecycle, Agenta helps organizations build, evaluate, debug, and ship AI applications with confidence, moving from guesswork to governance and accelerating the journey from prototype to production.

About diffray

In the modern software development lifecycle, code review is a critical but often time-consuming bottleneck. diffray reimagines this practice through specialized artificial intelligence. Rather than being just another AI code reviewer, it is a multi-agent system engineered to dissect pull requests with surgical precision. At its core, diffray addresses the fundamental flaw of generic AI models: overwhelming noise and false positives that frustrate developers and obscure genuine issues.

diffray deploys a dedicated ensemble of over 30 specialized AI agents, each an expert in a distinct domain such as security vulnerabilities, performance anti-patterns, bug detection, language-specific best practices, and even SEO considerations for web code, to deliver targeted, actionable feedback. This architectural choice is its primary value proposition, turning code review from a broad, shallow scan into a deep, multi-faceted analysis. It is designed for development teams of all sizes that want to improve code quality, accelerate release cycles, and empower their engineers.

Reported results include an 87% reduction in false positives, three times more genuine issues identified, and average PR review time cut from 45 to just 12 minutes per week. diffray shifts the developer's role from tedious line-by-line scrutiny to strategic oversight, freeing engineers to focus on architecture, innovation, and what truly matters in their code.
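The headline figures can be checked with simple arithmetic. The 45 and 12 minute values and the 87% rate come from the text; the baseline of 200 false positives is a made-up number used only to make the percentage tangible.

```python
def percent_reduction(before: float, after: float) -> float:
    """Relative reduction from `before` to `after`, as a percentage."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before * 100


def remaining_after(before: int, reduction_pct: int) -> float:
    """How much is left after a reduction of `reduction_pct` percent."""
    return before * (100 - reduction_pct) / 100


# 45 -> 12 minutes is a (45 - 12) / 45, roughly 73.3%, cut,
# consistent with the "over 70%" figure quoted for enterprise teams.
review_time_cut = percent_reduction(45, 12)

# Hypothetical baseline: 200 false positives before; an 87% reduction leaves 26.
false_positives_left = remaining_after(200, 87)
```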

Frequently Asked Questions

Agenta FAQ

Is Agenta truly open-source?

Yes, Agenta is a fully open-source platform. The core codebase is publicly available on GitHub, where developers can review the code, contribute to the project, and self-host the entire platform. This open model ensures transparency, avoids vendor lock-in, and allows the tool to be customized to fit specific organizational needs and integrated deeply into existing infrastructure.

How does Agenta handle collaboration for non-technical team members?

Agenta is specifically designed with a strong UI layer for non-technical participants. Product managers and domain experts can access the playground to safely edit and experiment with prompts without touching code. They can also view evaluation results, compare experiments, and provide human feedback or annotations directly through the web interface, making the AI development process truly collaborative.

Can I use Agenta with any LLM provider or framework?

Absolutely. Agenta is model-agnostic and framework-agnostic. It seamlessly integrates with major providers like OpenAI, Anthropic, Cohere, and open-source models via Ollama or Replicate. It also works with popular development frameworks such as LangChain and LlamaIndex. This flexibility allows teams to choose the best tools for their task and switch providers without overhauling their entire operations platform.

What is the difference between Agenta's evaluation and simple unit testing?

While unit tests check code logic, Agenta's evaluation assesses the probabilistic output of LLMs. It allows you to evaluate the full reasoning trace of an agent, not just the final string output. You can employ LLM-as-a-judge evaluators, custom code checks, and human scoring in a unified workflow. This creates a holistic, systematic process to measure the quality, reliability, and correctness of AI behavior against real-world scenarios.

diffray FAQ

How is diffray different from other AI code review tools like GitHub Copilot or SonarQube?

diffray's fundamental difference is its multi-agent, specialized architecture. Tools like GitHub Copilot are primarily AI pair programmers focused on code generation, not deep analysis. Traditional static analysis tools like SonarQube often rely on rule-based engines that can generate significant noise. diffray uses multiple, fine-tuned AI models each designed for a specific review domain (security, performance, etc.), resulting in more accurate, context-aware, and actionable feedback with dramatically fewer false positives than these alternatives.

What programming languages and frameworks does diffray support?

diffray is designed to be broad and versatile. Its multi-agent system includes specialists for all major programming languages and popular web frameworks. This includes, but is not limited to, JavaScript/TypeScript (React, Vue, Angular), Python (Django, Flask), Java, C#, Go, Ruby on Rails, and PHP. The specialized agents understand the unique idioms, best practices, and common pitfalls associated with each language and ecosystem.

How does diffray handle the privacy and security of our source code?

Code privacy and security are paramount. diffray can be deployed following strict data handling protocols. Typically, it operates by receiving only the diff (the changed code) from a pull request for analysis, not the entire codebase. Many deployments use secure, encrypted connections, and data retention policies can be configured. It is advisable to review diffray's specific security whitepaper and compliance certifications (like SOC 2) for detailed information on their data protection measures.
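Reviewing only the diff means working from a unified diff rather than the whole repository. The sketch below extracts each file's added lines with their new-file line numbers, which is roughly what a reviewer needs in order to comment on specific lines. It is a simplified, hypothetical parser (it ignores renames, binary files, and other edge cases), not a description of how diffray processes code.

```python
import re

# Hunk header, e.g. "@@ -10,4 +10,6 @@"; a missing count means 1.
HUNK = re.compile(r"^@@ -\d+(?:,(\d+))? \+(\d+)(?:,(\d+))? @@")


def added_lines(diff: str) -> dict[str, list[tuple[int, str]]]:
    """Map each changed file to its added lines, numbered as in the new file."""
    result: dict[str, list[tuple[int, str]]] = {}
    path = None
    new_line = old_left = new_left = 0
    for raw in diff.splitlines():
        if old_left > 0 or new_left > 0:
            # Inside a hunk: the counts from its header tell us where it ends,
            # so an added line whose content starts with "++" is not mistaken for a header.
            if raw.startswith("+"):
                if path is not None:
                    result.setdefault(path, []).append((new_line, raw[1:]))
                new_line += 1
                new_left -= 1
            elif raw.startswith("-"):
                old_left -= 1  # removed lines exist only in the old file
            elif raw.startswith("\\"):
                pass  # "\ No newline at end of file"
            else:
                # Context line, present in both versions.
                new_line += 1
                new_left -= 1
                old_left -= 1
            continue
        if raw.startswith("+++ "):
            target = raw[4:].split("\t")[0]
            path = None if target == "/dev/null" else target.removeprefix("b/")
        elif match := HUNK.match(raw):
            old_left = int(match.group(1) or 1)
            new_line = int(match.group(2))
            new_left = int(match.group(3) or 1)
    return result
```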

Can we customize the rules or feedback provided by diffray's agents?

Yes, diffray is built for adaptability. While its core agents provide expert analysis out of the box, teams can often customize severity levels, ignore specific patterns that are accepted in their codebase, and even define custom rules or guidelines. This lets the tool align closely with your team's coding standards and project requirements, keeping the feedback relevant to your context.
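Customization of this kind usually reduces to filtering and re-ranking findings before they are posted. The sketch below assumes a simple team configuration with ignored path globs, ignored rules, per-rule severity overrides, and a minimum severity; `ReviewConfig`, `apply_config`, and the rule names are hypothetical and do not reflect diffray's actual configuration format.

```python
from dataclasses import dataclass, field
from fnmatch import fnmatch

# Ordering used to compare severities.
SEVERITY_RANK = {"info": 0, "warning": 1, "error": 2}


@dataclass(frozen=True)
class Finding:
    rule: str
    path: str
    severity: str  # one of SEVERITY_RANK's keys


@dataclass
class ReviewConfig:
    """Hypothetical team-level overrides (not diffray's documented format)."""
    ignored_paths: list[str] = field(default_factory=list)  # glob patterns
    ignored_rules: set[str] = field(default_factory=set)
    severity_overrides: dict[str, str] = field(default_factory=dict)
    min_severity: str = "info"


def apply_config(findings: list[Finding], config: ReviewConfig) -> list[Finding]:
    """Drop ignored findings, re-rank severities, and enforce a minimum severity."""
    kept = []
    for f in findings:
        if f.rule in config.ignored_rules:
            continue
        if any(fnmatch(f.path, pattern) for pattern in config.ignored_paths):
            continue
        severity = config.severity_overrides.get(f.rule, f.severity)
        if SEVERITY_RANK[severity] < SEVERITY_RANK[config.min_severity]:
            continue
        kept.append(Finding(f.rule, f.path, severity))
    return kept
```

For example, a team could skip generated `migrations/*` files, silence a line-length rule it already enforces with a formatter, and promote leftover debug prints to errors.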

Alternatives

Agenta Alternatives

Agenta is an open-source LLMOps platform designed to bring order and collaboration to the development of large language model applications. It serves as a centralized hub for teams to experiment, evaluate, and deploy AI features systematically, moving beyond ad-hoc prompt management and unreliable testing. Users explore alternatives for various reasons. Some require a fully managed, proprietary solution with dedicated support, while others might seek a platform with a narrower focus, such as only production monitoring or only prompt management. Budget, team size, and the need for specific integrations or deployment models also drive the search for different tools. When evaluating an alternative, consider your team's primary pain points. Key factors include the platform's approach to collaborative experimentation, the depth of its evaluation and testing framework, its observability and debugging capabilities for production systems, and whether its licensing and deployment model aligns with your technical and financial constraints.

diffray Alternatives

diffray is an AI-powered code review tool within the development and DevOps category. It stands out by utilizing a multi-agent architecture to analyze code for security, performance, and best practices, aiming to drastically reduce false positives and review time. Users often explore alternatives for various reasons. These can include budget constraints and specific pricing models, the need for integration with platforms beyond GitHub and GitLab, or a desire for different feature sets like support for additional programming languages or different reporting interfaces. When evaluating alternatives, key considerations should include the accuracy of the AI and its reduction of false positives, the depth of codebase context and integration capabilities, and the overall clarity and actionability of the feedback provided to developers. The goal is to find a tool that enhances code quality without disrupting the development workflow.