Seedance 2.0 vs Seedance 2.0

Side-by-side comparison to help you choose the right AI tool.

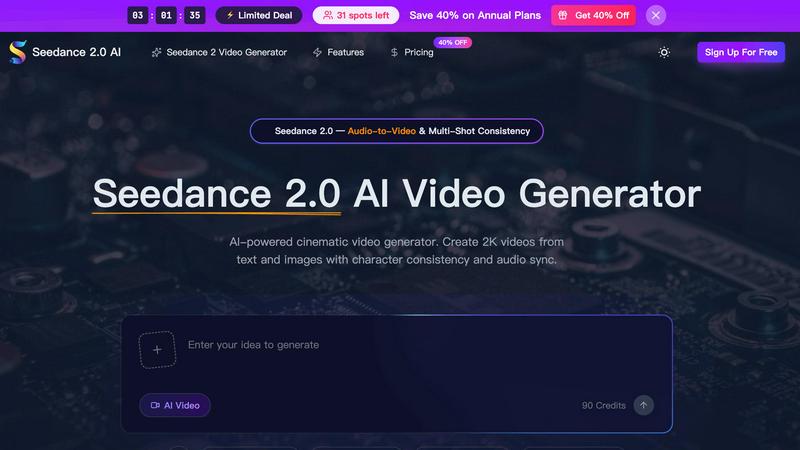

Seedance 2.0 creates cinematic AI videos from text or images with consistent characters and audio sync.

Last updated: February 28, 2026

Seedance 2.0

Seedance 2.0 transforms your text and images into hyper-realistic, professionally stable videos with ease.

Last updated: February 28, 2026

Visual Comparison

Seedance 2.0

Seedance 2.0

Feature Comparison

Seedance 2.0

Cinematic 2K Text-to-Video Generation

Seedance 2.0's foundation is its powerful text-to-video capability, which interprets natural language prompts to generate videos up to 12 seconds long in stunning 2K resolution. Users can describe intricate scenes, specify character actions, and dictate complex camera movements like zooms, pans, and tracking shots. The AI renders these descriptions with a sophisticated understanding of cinematic principles, producing clips with realistic textures, accurate lighting, and natural motion that are immediately suitable for professional broadcast and commercial applications.

Multi-Shot Character Consistency Engine

This is a cornerstone feature that sets Seedance 2.0 apart. Its multi-shot storytelling engine can "lock in" the visual identity of a character, including facial features, clothing details, and body proportions, across an unlimited number of shots and camera angles. This allows creators to build episodic narratives, short films, or branded content series where characters remain visually identical from scene to scene, enabling coherent storytelling that was previously difficult to achieve with AI video tools.

Native Audio Generation & Lip-Sync

Unlike models that generate silent video, Seedance 2.0 produces synchronized audio natively in a single pass. The AI creates a complete soundscape, including ambient Foley effects, background music, and phoneme-accurate lip-sync for dialogue in more than 8 languages. An audio-to-video mode is also supported: users upload their own voiceover, and the AI animates a character's mouth movements to match the speech with near motion-capture precision, which simplifies the production pipeline.

Advanced Image & Video-to-Video Conversion

Beyond text, Seedance 2.0 can animate static images and extend existing video clips. The image-to-video mode breathes life into product photos, concept art, or portraits by adding natural, cinematic motion while meticulously preserving the original composition and fine details. The video extension capabilities allow creators to seamlessly lengthen their generated clips, providing greater flexibility in editing and storytelling without compromising on the consistency of style or quality.

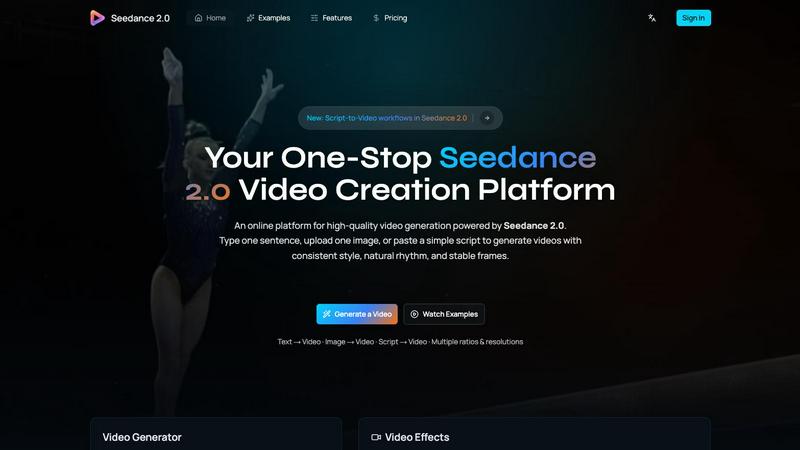

Seedance 2.0

Multimodal Generation Engine

Seedance 2.0 transcends simple text prompts by accepting multiple input modalities. Users can initiate video creation from a single sentence (Text-to-Video), use a reference image to guide composition and style (Image-to-Video), or even provide a script to shape story beats and pacing. This flexibility allows for precise creative control, enabling creators to start from their strongest asset, whether it's a written concept, a visual mood board, or a structured narrative, and see it come to life with coherent motion.

Integrated Audio-Video Synthesis (Pro)

A groundbreaking feature of the Pro version is its ability to generate synchronized video and audio in a single, unified pass. This goes beyond adding a music track; the model creates realistic, context-aware sound effects, dynamic background music, synthesized speech, and even accurate multilingual lip-sync. This holistic approach dramatically simplifies the production workflow, removing the need for separate audio editing software and manual synchronization, resulting in a perfectly cohesive audiovisual experience from the moment of generation.

Physics-Aware Motion Modeling

The model excels at simulating real-world physical dynamics with remarkable accuracy. It understands and renders complex interactions like the natural flutter of cloth in the wind, the fluid physics of water splashes, the turbulent behavior of flames and smoke, and intricate particle effects. This deep comprehension of physical principles ensures that motion within generated videos feels authentic and believable, moving beyond simple animation to capture the subtle nuances of the real world.

Temporal Diffusion Transformer Architecture

The technical backbone of Seedance 2.0 is a novel diffusion transformer built specifically for temporal consistency. Unlike architectures that process frames in isolation, its advanced temporal attention mechanism reuses motion cues across frames. This ensures critical elements like character identity, facial proportions, lighting conditions, and scene geometry remain stable throughout the sequence, resulting in significantly fewer flickers, smoother transitions, and overall more stable and professional-looking video output.
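To make the idea of attending across frames concrete, here is a minimal sketch of generic temporal self-attention in plain Python. It is not Seedance 2.0's actual architecture, which is not published in this comparison; the function names and the toy feature vectors are assumptions chosen for illustration. Each frame's feature vector is replaced by a weighted mix of all frames, so an outlier frame is pulled toward its neighbours.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def temporal_attention(frames):
    """Mix per-frame feature vectors using scaled dot-product attention.

    frames: list of equal-length feature vectors, one per video frame.
    Returns a new list in which every frame is a weighted average of
    all frames (hypothetical toy model, no learned projections).
    """
    dim = len(frames[0])
    scale = math.sqrt(dim)
    mixed = []
    for query in frames:
        # Similarity of this frame to every frame in the sequence.
        scores = [sum(q * k for q, k in zip(query, key)) / scale for key in frames]
        weights = softmax(scores)
        # Weighted average of all frame features.
        mixed.append(
            [sum(w * f[i] for w, f in zip(weights, frames)) for i in range(dim)]
        )
    return mixed


# Two consistent frames and one outlier: the outlier is pulled toward the others.
smoothed = temporal_attention([[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]])
```

A production model would add learned query, key, and value projections and operate on spatial patches rather than one vector per frame; the sketch only shows why sharing information across time can reduce frame-to-frame flicker.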

Use Cases

Seedance 2.0

Independent Film & Short Film Production

Aspiring and independent filmmakers can leverage Seedance 2.0 to produce high-concept short films, animated sequences, and proof-of-concept trailers without the need for large crews, actors, or expensive equipment. The multi-shot consistency allows for the creation of recurring characters, while the native audio generation handles dialogue and sound design, enabling solo creators to realize complex visual narratives that were once resource-prohibitive.

Marketing & Advertising Content Creation

Marketing teams and agencies can rapidly prototype and produce commercial advertisements, product demonstration videos, and social media campaign content. The ability to generate a consistent brand spokesperson or animated product across multiple videos ensures cohesive messaging. The 2K professional output means the content is ready for use across digital platforms, television, and online advertising, speeding up time-to-market for campaigns.

Educational & Explainer Video Development

Educators and instructional designers can create engaging animated explainer videos and educational content. Complex concepts can be visualized through custom-generated scenes and characters. The audio-to-video feature is particularly useful for synchronizing narration with animated visuals, making lessons more dynamic and accessible while allowing for easy updates and localization into different languages.

Concept Art & Storyboarding Visualization

Artists, game developers, and writers can use Seedance 2.0 to bring their static concept art, character designs, and storyboards to life. By uploading an image, they can see their creations move and interact in a realistic environment, providing a powerful tool for pitching ideas, testing visual concepts, and creating dynamic portfolios that showcase not just a single moment, but a scene in motion.

Seedance 2.0

Rapid Social Media Content Creation

Marketers and social media managers can leverage Seedance 2.0 to produce a high volume of engaging, platform-optimized video content quickly. By inputting product images or campaign slogans, they can generate eye-catching short clips with consistent branding, dynamic motion, and integrated audio tailored for platforms like TikTok, Instagram Reels, or YouTube Shorts, all without needing extensive video production resources or expertise.

Film and Video Pre-visualization

Filmmakers and directors can use the tool as a powerful pre-visualization (previs) aid. By converting script excerpts or concept art into animated sequences, they can experiment with scene composition, camera angles, lighting moods, and character blocking before expensive live-action shoots begin. This allows for efficient storytelling iteration, better communication of creative vision to crews, and significant savings in time and production costs.

Educational and Explainer Video Production

Educators and corporate trainers can transform complex information into digestible and engaging animated explainer videos. The model's ability to maintain consistent characters and visual style is ideal for serialized educational content, while its physics-aware generation can accurately demonstrate scientific concepts, historical events, or mechanical processes, making learning more interactive and visually compelling.

Prototyping for Game and Animation Studios

Game developers and animation studios can utilize Seedance 2.0 to rapidly prototype character animations, environmental effects, and cinematic cutscenes. The image-to-video function allows them to animate concept art or character sheets, testing movements and styles efficiently. The consistent output helps in maintaining artistic direction during the early creative phases, accelerating the overall development pipeline.

Overview

About Seedance 2.0

Seedance 2.0 represents a paradigm shift in AI-powered video creation, moving beyond simple animation to offer a full-fledged cinematic video generator. At its core, it is an advanced AI model capable of transforming text descriptions, static images, or audio inputs into high-quality, 2K resolution videos. Its groundbreaking V2 motion synthesis engine is designed for creators who demand professional-grade output, enabling the generation of videos with realistic physics, smooth motion, and complex lighting effects. The platform is engineered for a diverse user base, including independent filmmakers, content creators, marketing teams, and product designers who need to produce engaging visual content rapidly and without extensive technical resources. The primary value proposition of Seedance 2.0 lies in its unique combination of multi-shot character consistency and native audio generation, allowing for the creation of coherent, episodic content with synchronized sound in a single, streamlined workflow. This eliminates the traditional barriers of maintaining visual continuity and separate audio post-production, positioning Seedance 2.0 not just as a tool, but as a collaborative AI director for the next generation of digital storytelling.

About Seedance 2.0

Seedance 2.0 represents a paradigm shift in AI-driven content creation, developed by ByteDance's pioneering Seed research team. This isn't just another video generator; it's a comprehensive multimodal production suite designed to transform simple inputs into hyper-realistic, cinematic-quality video sequences. At its core, Seedance 2.0 addresses the most persistent challenges in AI video generation: jarring flicker, inconsistent character identity, and a lack of physical realism. Its primary value proposition lies in delivering unmatched temporal coherence and production-ready output, effectively bringing Hollywood-level fluidity and detail to creators' fingertips. The model is engineered for a broad spectrum of users, from individual content creators and marketers seeking rapid, high-quality video production to filmmakers and studios looking for powerful pre-visualization and asset generation tools. By integrating synchronized audio generation directly into the video creation pipeline, Seedance 2.0, particularly its Pro version, eliminates cumbersome post-production steps, offering a streamlined workflow that is both powerful and accessible. It stands as a formidable competitor in the landscape, distinguishing itself through a physics-aware architecture and a steadfast commitment to visual and narrative consistency.

Frequently Asked Questions

Seedance 2.0 FAQ

What is the maximum video length and resolution Seedance 2.0 can generate?

Seedance 2.0 can generate videos up to 12 seconds long at 2K resolution (2048x1080 pixels). The platform supports several aspect ratios, including 16:9, 9:16 for social media, 1:1, and cinematic 21:9, providing flexibility for different distribution channels. The video extension feature can be used to create longer sequences by lengthening existing clips.
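As a rough planning aid, the arithmetic for a longer sequence can be sketched as follows. The 12-second limit comes from the answer above; the assumption that each extension step adds at most another 12 seconds is ours, and `segments_needed` is a hypothetical helper, not part of any Seedance tooling.

```python
import math

MAX_CLIP_SECONDS = 12  # per-clip limit stated in the FAQ answer


def segments_needed(target_seconds, clip_seconds=MAX_CLIP_SECONDS):
    """Return how many clips of at most clip_seconds cover target_seconds.

    Assumes (hypothetically) that every generation or extension step
    contributes up to clip_seconds of footage.
    """
    if target_seconds <= 0:
        raise ValueError("target_seconds must be positive")
    return math.ceil(target_seconds / clip_seconds)


print(segments_needed(45))  # 45 / 12 = 3.75, rounded up to 4
```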

How does the Multi-Shot Character Consistency work?

The consistency engine creates a stable "identity signature" for each character you generate, based on your initial prompt or uploaded image. Once this signature is established, you can reference the same character in subsequent prompts for different shots, scenes, or actions. The AI maintains the core visual attributes, such as face shape, hair, clothing color, and style, across all generated videos, so the character remains recognizable and consistent.

Can I use my own audio or voiceover with Seedance 2.0?

Yes, absolutely. Seedance 2.0 features a dedicated audio-to-video mode. You can upload your own pre-recorded voiceover, dialogue, or soundtrack. The AI will then generate a video where the character's lip movements and facial expressions are synchronized to match your audio track with high accuracy. This allows for precise narrative control and the use of specific voice talent or branded audio.

What makes Seedance 2.0 different from other AI video generators?

Seedance 2.0 distinguishes itself through two primary, integrated capabilities: true multi-shot character consistency and native, synchronized audio generation. Many competitors either generate individual video clips without maintaining character identity across scenes or require separate tools for adding sound. Seedance 2.0 combines professional 2K cinematic output, a 30% faster V2 generation engine, and these unified features in a single platform designed for coherent, production-ready storytelling.

Seedance 2.0 FAQ

What makes Seedance 2.0 different from other AI video models?

Seedance 2.0 distinguishes itself through a multi-faceted approach focused on professional-grade output. Its core differentiators are the novel temporal diffusion transformer architecture for exceptional frame-to-frame coherence, the integrated audio-video generation in the Pro version that creates synchronized sound from the ground up, and a deep, physics-aware motion model that renders dynamic elements like cloth, water, and fire with remarkable realism, reducing the "uncanny valley" effect prevalent in other generators.

Can I control the aspect ratio and resolution of my videos?

Yes, Seedance 2.0 provides flexible output controls to suit various platforms and needs. Users can select from standard aspect ratios including 9:16 (vertical), 1:1 (square), and 16:9 (widescreen). The platform also offers different clarity/resolution options, allowing creators to generate content optimized for everything from social media stories to high-definition presentations, ensuring the final video fits its intended destination perfectly.
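For readers who want to see what these ratios mean in pixels, the sketch below derives frame dimensions from an aspect-ratio string. The 1080-pixel short edge is an assumed example value, since the listing does not state the exact resolution options, and `frame_size` is a hypothetical helper.

```python
def frame_size(aspect, short_edge=1080):
    """Return (width, height) in pixels for an aspect ratio such as "16:9".

    short_edge is the length of the shorter side; 1080 is an assumed
    example value, not a documented Seedance setting.
    """
    w_ratio, h_ratio = (int(part) for part in aspect.split(":"))
    if w_ratio >= h_ratio:
        height = short_edge
        width = round(short_edge * w_ratio / h_ratio)
    else:
        width = short_edge
        height = round(short_edge * h_ratio / w_ratio)
    # Video encoders generally expect even dimensions, so round odd values up.
    return width + width % 2, height + height % 2


for ratio in ("9:16", "1:1", "16:9"):
    print(ratio, frame_size(ratio))
```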

How does the 'Fixed Lens' feature work?

The 'Fixed Lens' feature is a stabilization control that instructs the model to keep the camera view static and stable throughout the generated video. When activated, it minimizes simulated camera movement, pans, or zooms, resulting in a shot that feels like it was filmed on a tripod. This is particularly useful for creating product showcases, interview-style clips, or any scene where the focus should remain steadily on the subject and its motion without distracting camera work.

What is required to use the integrated audio generation?

The integrated audio-video synthesis, which generates synchronized sound effects, music, and lip-sync, is a feature of the Seedance 2.0 Pro model. Using it consumes additional credits or requires a higher-tier subscription plan; the interface notes that it "Costs 2x credits." Users need access to the Pro model and enough credits to generate videos with this all-in-one audio feature enabled.
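The "2x credits" note lends itself to a quick budget check. The sketch below assumes a flat per-video base cost, which the listing does not specify; the base value of 10 credits and the `credits_required` helper are hypothetical.

```python
AUDIO_MULTIPLIER = 2  # from the interface note "Costs 2x credits"


def credits_required(base_credits_per_video, videos, with_audio):
    """Estimate total credits for a batch of generations.

    base_credits_per_video is a hypothetical flat cost; actual pricing
    may vary with length, resolution, or plan.
    """
    multiplier = AUDIO_MULTIPLIER if with_audio else 1
    return base_credits_per_video * multiplier * videos


silent_total = credits_required(10, videos=5, with_audio=False)
audio_total = credits_required(10, videos=5, with_audio=True)
print(silent_total, audio_total)  # 50 100
```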

Alternatives

Seedance 2.0 Alternatives

Seedance 2.0 is a powerful AI video generation platform, part of a competitive landscape where creators and businesses seek tools to bring visual stories to life. Users often explore alternatives for various practical reasons, including budget constraints, specific feature requirements not fully met, or a need for a different user experience and integration capabilities with their existing workflow. When evaluating other options, it's crucial to look beyond surface-level claims. Key considerations should include the underlying model's architecture and scale, which directly impact output quality and creativity. The efficiency of operation, especially for generating longer or complex scenes, is vital for practical use. Furthermore, the breadth of capabilities, such as supporting multi-modal tasks or offering extensive language support, can determine how versatile a tool is for diverse projects.

Seedance 2.0 Alternatives

Seedance 2.0 is a sophisticated AI video generation tool that transforms text prompts or reference images into high-quality, dynamic videos. It belongs to the rapidly evolving category of generative AI video models, distinguished by its focus on producing consistent motion, stable frames, and realistic physics. Users often explore alternatives for a variety of practical reasons. These can include budget constraints, as premium AI video tools often operate on subscription or credit-based models. Others might seek different feature sets, such as specific export formats, more granular control over animation, or platform compatibility that better integrates with their existing workflow. The need for a different user experience or access model can also drive the search. When evaluating other options, key considerations should align with your primary goals. Prioritize the tool's core strength in either text-to-video or image-to-video generation, the realism and coherence of its output, and the flexibility it offers for customization. Also, assess the overall value proposition by weighing its output quality against its cost, ease of use, and any limitations on video length or resolution.