Hostim.dev vs OpenMark AI

Side-by-side comparison to help you choose the right tool.

Hostim.dev

Hostim.dev simplifies Docker app deployment with built-in databases on EU-hosted infrastructure for predictable costs.

Last updated: March 1, 2026

OpenMark AI

OpenMark AI benchmarks over 100 LLMs on your specific task for cost, speed, and quality with no API keys or setup required.

Last updated: March 26, 2026

Visual Comparison

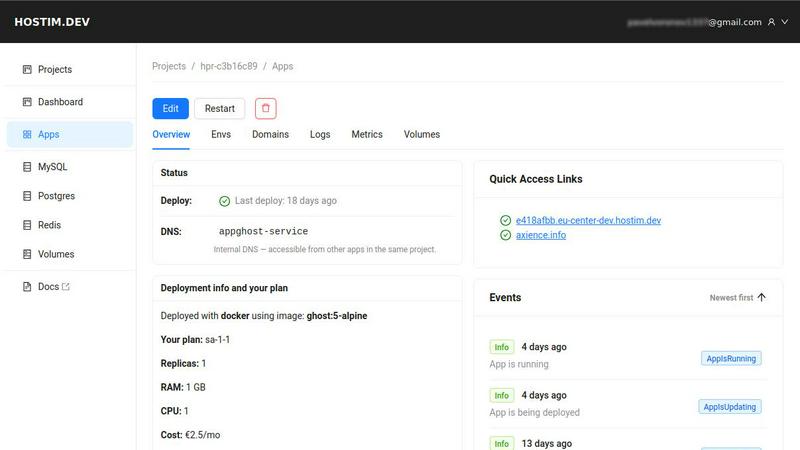

Hostim.dev

OpenMark AI

Feature Comparison

Hostim.dev

Simple Deployment

Hostim.dev simplifies the deployment process by allowing users to launch applications directly from Docker images, Git repositories, or Docker Compose files. This capability eliminates the need for complex configurations and DevOps expertise, enabling developers to go live in just a few minutes.

Managed Databases and Storage

With Hostim.dev, users benefit from instant provisioning of managed databases such as MySQL, PostgreSQL, and Redis, along with persistent storage. This feature means that developers do not have to manage separate database services, streamlining the workflow and reducing setup time.

Secure and Isolated Environments

Every project on Hostim.dev runs in a fully isolated environment. Automatic HTTPS setup secures traffic, live logs and metrics give visibility into each deployment, and isolation helps prevent interference between different projects.

Transparent Pricing Model

Hostim.dev offers a straightforward pricing structure that starts at €2.50 per month, with no hidden fees. This model allows developers to track costs per project, making it easier to manage budgets and provide clear billing to clients.

OpenMark AI

Plain Language Task Benchmarking

The core of OpenMark AI is its intuitive, code-free interface. Users simply describe the task they want to test in natural language, such as "extract product names and prices from this customer email" or "generate a summary of this technical document." The platform handles the complexity of prompt formatting and execution across all supported models, making advanced benchmarking accessible to developers and product managers alike without requiring deep technical setup or scripting.

Multi-Model Comparison with Real API Data

OpenMark AI provides genuine, side-by-side comparisons by making live API calls to each model in its extensive catalog. This ensures you see real performance metrics—actual cost, true latency, and authentic output quality—rather than relying on cached or idealized marketing numbers from model providers. This real-world testing is essential for making informed decisions about which model will perform best in a production environment.

Variance and Stability Analysis

A standout feature is the platform's focus on consistency. Instead of judging a model on a single execution, OpenMark AI runs your task multiple times to measure stability. The results show variance in outputs, latency, and costs, highlighting whether a model is reliably good or just occasionally lucky. This insight is critical for building robust, predictable applications where consistent quality is non-negotiable.
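
The idea of judging a model on repeated runs rather than a single execution can be sketched as follows. This is a minimal illustration, not OpenMark AI's actual method: the function name `stability_summary`, the model names, and the scores are all hypothetical.

```python
from statistics import mean, pstdev

def stability_summary(values):
    """Summarize repeated measurements of one metric for one model."""
    avg = mean(values)
    spread = pstdev(values)  # population standard deviation across the runs
    # Coefficient of variation: spread relative to the mean (0 means perfectly stable).
    cv = spread / avg if avg else 0.0
    return {"mean": avg, "stdev": spread, "cv": cv}

# Hypothetical quality scores (0 to 1) from five repeated runs of the same task.
runs = {
    "model_a": [0.90, 0.91, 0.89, 0.90, 0.90],
    "model_b": [0.99, 0.60, 0.95, 0.70, 0.98],
}

summaries = {name: stability_summary(scores) for name, scores in runs.items()}
for name, s in summaries.items():
    print(f"{name}: mean={s['mean']:.3f} stdev={s['stdev']:.3f} cv={s['cv']:.3f}")
```

In this made-up data, `model_b` has the best single run (0.99) but a lower mean and a much larger spread than `model_a`, which is the "occasionally lucky" pattern described above. The same summary could be applied to latency or cost per run.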

Unified Credit-Based Hosted Platform

OpenMark AI streamlines the benchmarking process by operating on a hosted credit system. Users do not need to supply, manage, or pay directly for individual API keys from OpenAI, Anthropic, Google, or other providers. This removes significant configuration overhead and security concerns, allowing teams to focus purely on evaluating model performance and cost-efficiency across the entire AI ecosystem from one central dashboard.

Use Cases

Hostim.dev

Freelancers

Freelancers can leverage Hostim.dev to quickly deploy client projects without needing extensive DevOps knowledge. The per-project billing and isolation features enable them to manage multiple clients effortlessly and deliver projects more efficiently.

Agencies

Agencies can utilize Hostim.dev to create isolated environments for each client, ensuring that projects remain separate and secure. The clear cost breakdown per project allows for accurate budgeting and client billing, enhancing financial transparency.

Students

Students can take advantage of Hostim.dev to learn about real-world deployments and database management using Docker. The free trial and student credits provide an opportunity to build a portfolio with tangible projects hosted on a professional platform.

SaaS Builders

SaaS developers can use Hostim.dev to quickly prototype and launch applications while maintaining the flexibility of Docker. The managed services and easy scalability allow them to focus on development rather than infrastructure management, accelerating time to market.

OpenMark AI

Pre-Deployment Model Selection for New Features

Before integrating an LLM into a new application feature—like a chatbot or content generator—teams can use OpenMark AI to empirically test which model delivers the best quality for their specific prompts at an acceptable cost and latency. This data-driven selection mitigates the risk of shipping with an underperforming or prohibitively expensive model, ensuring a stronger product launch.

Cost Optimization for Existing AI Workflows

For teams already using AI in production, OpenMark AI serves as a powerful optimization tool. By benchmarking their current prompts against newer or alternative models, they can identify opportunities to maintain or improve output quality while significantly reducing monthly API expenses, directly impacting the bottom line.

Validating Model Consistency for Critical Tasks

In applications where reliability is paramount, such as legal document analysis, medical information extraction, or financial data processing, consistency is as important as accuracy. OpenMark AI's stability testing helps teams identify models that produce dependable, low-variance results every time, which is essential for building trust and ensuring compliance in sensitive workflows.

Comparative Research and Development (R&D)

AI researchers and developers exploring new prompting techniques, evaluating emerging models, or building complex agentic systems can use OpenMark AI as a rapid testing ground. It allows for quick iteration and comparison across a broad model landscape to understand nuanced performance differences for tasks like classification, translation, or RAG (Retrieval-Augmented Generation) without operational hassle.

Overview

About Hostim.dev

Hostim.dev is an innovative Platform-as-a-Service (PaaS) solution tailored for the containerized era, specifically designed for developers who seek a balance between simplicity and power. It enables users to deploy complex, production-ready applications within minutes, avoiding the extensive traditional DevOps overhead. By allowing deployment from Docker images, Git repositories, or Docker Compose files, Hostim.dev instantly provisions an isolated environment with all necessary components, including managed databases (MySQL, PostgreSQL, Redis), persistent storage, and internal networking. Each project operates in its own Kubernetes namespace on GDPR-compliant bare-metal servers located in Germany, ensuring data protection and privacy. The transparent, per-project hourly billing model makes it ideal for solo developers, startups, agencies, and SaaS builders, allowing them to focus on development without the worry of unexpected costs or vendor lock-in.

About OpenMark AI

OpenMark AI is a hosted web application for a common and often opaque problem in AI development: choosing the right large language model (LLM) for a specific task. Rather than relying on theoretical benchmarks and marketing claims, it runs task-level tests against live models. Developers and product teams describe their use case in plain language, such as data extraction, customer support Q&A, or complex agentic workflows, and OpenMark AI executes the same prompt against a catalog of over 100 models in a single session. The platform then compares the models side by side on four metrics: actual cost per API request, latency, scored output quality, and stability across repeat runs. Measuring stability keeps decisions from resting on a single "lucky" output. Because the platform runs on a credit system, teams do not need to configure and manage separate API keys for OpenAI, Anthropic, Google, and other providers. OpenMark AI is built for pre-deployment validation: it helps teams select a cost-efficient, reliable model for their needs before shipping an AI feature.

Frequently Asked Questions

Hostim.dev FAQ

What does the free tier include?

Hostim.dev offers a free 5-day trial with no credit card required. During the trial, users can deploy applications and test the platform's features.

Can I deploy with just a Compose file?

Yes, you can deploy applications by simply pasting your Docker Compose file into Hostim.dev. This feature streamlines the deployment process, making it accessible even for those with limited DevOps experience.

Where is my app hosted?

All applications on Hostim.dev are deployed on bare-metal servers located in Germany. This setup is designed to comply with the GDPR, supporting data protection and privacy for European users.

Do I need to know Kubernetes?

No, you do not need to have prior knowledge of Kubernetes to use Hostim.dev. The platform abstracts the complexity of Kubernetes management and allows users to focus on deploying their applications with ease.

OpenMark AI FAQ

How does OpenMark AI calculate the quality score for model outputs?

OpenMark AI combines automated evaluation metrics with, where applicable, human-defined rubrics tailored to your specific task. The system analyzes factors like correctness, completeness, adherence to instructions, and coherence. Because it scores multiple runs rather than one, the result reflects the model's typical performance, not a one-off result.
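
One way to picture a rubric-based score averaged over several runs is the sketch below. It is an assumption for illustration only and does not describe OpenMark AI's scoring: the weights in `RUBRIC_WEIGHTS`, the function names, and the per-criterion scores are hypothetical.

```python
# Hypothetical rubric: criterion weights chosen for illustration; they sum to 1.
RUBRIC_WEIGHTS = {
    "correctness": 0.4,
    "completeness": 0.3,
    "instruction_adherence": 0.2,
    "coherence": 0.1,
}

def rubric_score(criterion_scores):
    """Weighted average of per-criterion scores, each in the range 0 to 1."""
    missing = set(RUBRIC_WEIGHTS) - set(criterion_scores)
    if missing:
        raise KeyError(f"missing criteria: {sorted(missing)}")
    return sum(RUBRIC_WEIGHTS[name] * criterion_scores[name] for name in RUBRIC_WEIGHTS)

def mean_score(runs):
    """Average the rubric score over repeated runs of the same task."""
    return sum(rubric_score(run) for run in runs) / len(runs)

# Made-up per-criterion scores for two runs of the same task on one model.
runs = [
    {"correctness": 1.0, "completeness": 0.5, "instruction_adherence": 1.0, "coherence": 1.0},
    {"correctness": 0.5, "completeness": 0.5, "instruction_adherence": 1.0, "coherence": 1.0},
]
print(round(mean_score(runs), 3))
```

Weighting correctness most heavily is a design choice for this example; a task such as summarization might reasonably weight completeness or coherence higher.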

Do I need my own API keys to use OpenMark AI?

No, one of the primary advantages of OpenMark AI is that it operates on a hosted credit system. You purchase credits from OpenMark, and the platform manages all the underlying API calls to the various model providers (OpenAI, Anthropic, Google, etc.) on your behalf. This eliminates the need for you to set up, manage, or fund separate accounts and keys for every service you want to test.

What is the difference between a "task" and a "benchmark" in OpenMark?

A "Task" is your core unit of work: the specific prompt and instructions you want to test, described in plain language. A "Benchmark" is the execution of that task. When you run a benchmark, OpenMark AI takes your defined task, sends it to the selected models (one or many), collects the real API results, and compiles the comparative analysis on cost, latency, quality, and stability.
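
The task/benchmark distinction can be sketched as a small data model. This is a hypothetical illustration, not OpenMark AI's API: `Task`, `RunResult`, `run_benchmark`, and the stand-in model functions are invented for the example, and no real provider is called.

```python
import time
from dataclasses import dataclass
from typing import Callable, Dict, List

@dataclass
class Task:
    """The unit of work: what you want the model to do, in plain language."""
    description: str

@dataclass
class RunResult:
    """One execution of the task on one model."""
    model: str
    output: str
    latency_s: float

def run_benchmark(task: Task, models: Dict[str, Callable[[str], str]],
                  repeats: int = 3) -> List[RunResult]:
    """Execute the same task against every model, several times each."""
    results = []
    for name, call_model in models.items():
        for _ in range(repeats):
            start = time.perf_counter()
            output = call_model(task.description)
            results.append(RunResult(name, output, time.perf_counter() - start))
    return results

# Stand-in "models": plain functions, not real provider APIs.
fake_models = {
    "model_a": lambda prompt: prompt.upper(),
    "model_b": lambda prompt: prompt[::-1],
}

task = Task("extract product names and prices from this customer email")
results = run_benchmark(task, fake_models, repeats=2)
print(len(results), "runs collected")
```

The task is defined once and stays fixed; each benchmark produces a fresh set of results for it, so the same task can be re-run later against a different selection of models.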

How does OpenMark AI help with cost efficiency compared to just choosing the cheapest model?

Cost efficiency is about value, not just price. A cheaper model might produce low-quality outputs that require expensive human review or cause user churn. OpenMark AI lets you see the trade-off directly: you can check whether paying slightly more for a different model yields markedly better quality or consistency. Factoring in the cost of corrections and lost users gives a figure closer to total cost of ownership than the per-call price alone.
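
A rough worked example of this trade-off, under simplifying assumptions: every rejected output is retried and also incurs a fixed review cost, and attempts are independent. The function `cost_per_accepted_output` and all prices and acceptance rates are hypothetical, not figures from OpenMark AI or any provider.

```python
def cost_per_accepted_output(price_per_call, acceptance_rate, review_cost_per_failure):
    """Expected total cost to obtain one acceptable output.

    Each attempt costs price_per_call; a rejected attempt also costs
    review_cost_per_failure (for example, human correction time).
    With independent attempts, the expected number of attempts per
    acceptable output is 1 / acceptance_rate.
    """
    if not 0 < acceptance_rate <= 1:
        raise ValueError("acceptance_rate must be in (0, 1]")
    expected_attempts = 1 / acceptance_rate
    expected_failures = expected_attempts - 1
    return expected_attempts * price_per_call + expected_failures * review_cost_per_failure

# Hypothetical figures: a cheap model that fails often vs. a pricier, more reliable one.
cheap = cost_per_accepted_output(price_per_call=0.001, acceptance_rate=0.70,
                                 review_cost_per_failure=0.50)
pricier = cost_per_accepted_output(price_per_call=0.010, acceptance_rate=0.98,
                                   review_cost_per_failure=0.50)

print(f"cheap model:   {cheap:.4f} per accepted output")
print(f"pricier model: {pricier:.4f} per accepted output")
```

With these made-up numbers, the model that costs ten times more per call ends up far cheaper per accepted output, because the review cost of failures dominates the per-call price.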

Alternatives

Hostim.dev Alternatives

Hostim.dev is an innovative Platform-as-a-Service (PaaS) solution designed specifically for developers who want to deploy Docker applications effortlessly on a robust, EU-based infrastructure. It streamlines the application deployment process by allowing users to launch complex, production-ready applications in minutes, while bypassing traditional DevOps challenges related to infrastructure and configuration. This ease of use, combined with features like managed databases and automatic provisioning, makes it a compelling choice for many in the tech community. Despite its advantages, users often seek alternatives to Hostim.dev for various reasons, including pricing, specific feature requirements, or compatibility with preferred workflows. When exploring alternatives, it’s essential to consider factors such as ease of deployment, scalability, supported technologies, and overall cost-effectiveness. Evaluating these elements can help developers and teams find a solution that best meets their unique needs and project demands.

OpenMark AI Alternatives

OpenMark AI is a developer tool for task-level benchmarking of large language models. It allows teams to test many LLMs simultaneously on their specific use case, comparing real-world metrics like cost, latency, quality, and output stability from actual API calls. Users may explore alternatives for various reasons, such as budget constraints, a need for different feature sets like automated testing integration, or a preference for self-hosted solutions that offer more data control. Some may seek tools with a stronger focus on post-deployment monitoring rather than pre-launch validation. When evaluating other options, consider what truly matters for your project. Key factors include the breadth of supported models, whether the tool uses real API data or estimates, the depth of analysis on cost versus quality, and how it measures output consistency across multiple runs to ensure reliable performance.