Appkittie vs OpenMark AI

Side-by-side comparison to help you choose the right AI tool.

Appkittie

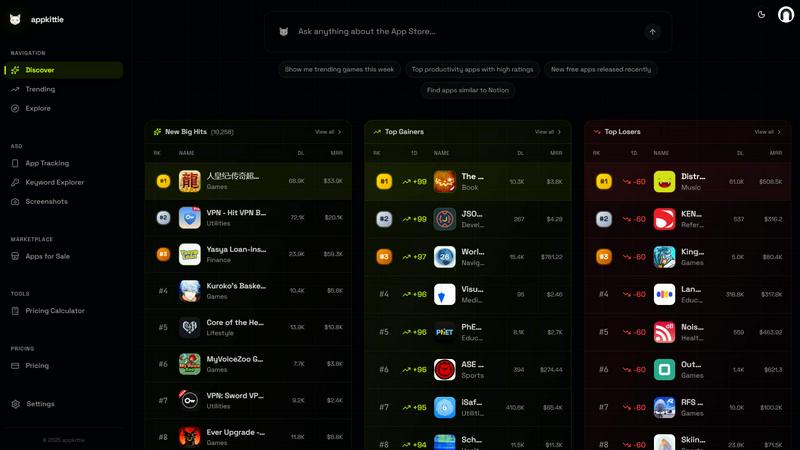

Appkittie helps you discover successful apps, track their growth insights, and replicate winning strategies to boost your own app's success.

Last updated: March 18, 2026

OpenMark AI benchmarks 100+ LLMs on your task: cost, speed, quality & stability. Browser-based; no provider API keys for hosted runs.

Overview

About Appkittie

Appkittie is an app intelligence platform for founders, indie hackers, and marketers looking for lucrative app opportunities. In an industry where guessing is often the norm, Appkittie takes a data-driven approach: users analyze a large database of mobile apps to identify those generating significant revenue and downloads. This lets them validate ideas and gauge market demand before investing time and resources.

What sets Appkittie apart is its insight into the marketing strategies of top-performing apps. By surfacing winning advertisements, influential creators on social media platforms like TikTok, and effective Apple Search Ads campaigns, Appkittie lets users replicate proven success patterns. Understanding how these apps acquire users and grow helps users avoid wasting effort on unproven concepts and build apps that resonate with their audience. Whether you are exploring startup ideas, validating niche markets, or researching competitors, Appkittie provides the insights needed to build apps that meet real user needs.

About OpenMark AI

OpenMark AI is a web application for task-level LLM benchmarking. You describe what you want to test in plain language, run the same prompts against many models in one session, and compare cost per request, latency, scored quality, and stability across repeat runs, so you see variance, not a single lucky output.
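To make the idea of stability across repeat runs concrete, here is a minimal Python sketch that summarizes repeated quality scores by their mean and spread. It is an illustration only, not OpenMark AI's scoring method: the function name `summarize_runs`, the model names, and the scores are all hypothetical.

```python
from statistics import mean, pstdev


def summarize_runs(scores):
    """Return (mean, population standard deviation) of repeat-run scores."""
    return mean(scores), pstdev(scores)


# Hypothetical quality scores (0 to 1) from five repeat runs of one task.
runs = {
    "model_a": [0.9, 0.9, 0.9, 0.9, 0.9],
    "model_b": [1.0, 0.6, 1.0, 0.7, 1.0],
}

for name, scores in runs.items():
    avg, spread = summarize_runs(scores)
    # A larger spread means less consistent output across runs.
    print(f"{name}: mean={avg:.2f} spread={spread:.2f}")
```

In this made-up data, the second model produces the best single output but varies much more from run to run, which is the kind of difference a single sample would hide.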

The product is built for developers and product teams who need to choose or validate a model before shipping an AI feature. Hosted benchmarking uses credits, so you do not need to configure separate OpenAI, Anthropic, or Google API keys for every comparison.

You get side-by-side results with real API calls to models, not cached marketing numbers. Use it when you care about cost efficiency (quality relative to what you pay), not just the cheapest token price on a datasheet.
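One simple way to read "quality relative to what you pay" is as quality score divided by cost per request. The sketch below assumes that definition, which is not confirmed as OpenMark AI's own formula; the function `quality_per_dollar`, the model names, and all numbers are hypothetical.

```python
def quality_per_dollar(quality, cost_per_request):
    """Quality score divided by cost per request (assumed efficiency metric)."""
    if cost_per_request <= 0:
        raise ValueError("cost_per_request must be positive")
    return quality / cost_per_request


# Hypothetical benchmark results: quality on a 0 to 1 scale, cost in USD.
candidates = {
    "cheap_model": {"quality": 0.50, "cost": 0.0010},
    "mid_model": {"quality": 0.90, "cost": 0.0015},
}

# Pick the model with the highest quality per dollar, not the lowest price.
best = max(
    candidates,
    key=lambda name: quality_per_dollar(
        candidates[name]["quality"], candidates[name]["cost"]
    ),
)
print(best)
```

With these illustrative figures, the more expensive model comes out ahead because its quality gain outweighs its higher price per request.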

OpenMark AI supports a large catalog of models and focuses on pre-deployment decisions: which model fits this workflow, at what cost, and whether outputs are consistent when you run the same task again. Free and paid plans are available; details are shown in the in-app billing section.